OBIEE High Availability - The BI Server

At the beginning of the month, most of the Rittman Mead Consulting team was at the UKOUG conference in Birmingham, where Jon Mead and I presented a paper called "OBIEE High Availability". We discussed the different components of the Oracle BI EE stack, how they can be clustered, and some of the main features of such a setup.

The OBIEE architecture, in its simplest form, comprises the Oracle BI Server, Oracle BI Presentation Server, Oracle BI Java Host and the J2EE container that runs the web application (let's just call that bit OC4J, though it doesn't have to be hosted in Oracle Containers for J2EE). Behind the Oracle BI Server we might expect to find several different data sources, but clustering those is well outside the scope of this write-up.

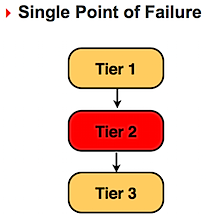

In a regular installation we always have a single point of failure. If any one server goes down or loses network connectivity, we lose all service. By making each node redundant we reduce the risk of a single failure bringing down the whole stack.

Setting the BI Server to a clustered mode

The clustering of the BI Server and the Cluster Controller is configured in the NQSConfig.INI and NQClusterConfig.INI files, found in the ORACLEBI/server/Config/ directory. Once you have installed the BI Server on all the dedicated servers, you need to configure every node to join the cluster we are creating. One server must be chosen as the primary Cluster Controller. It is responsible for receiving connection requests to the cluster and forwarding each request to one of the BI Servers participating in the cluster. We can also configure a secondary Cluster Controller to act as a backup if the primary goes down. If you don't bother setting up a secondary Cluster Controller, you have introduced a single point of failure.

Here are some of the main configuration parameters that need to be set in the NQSConfig.INI file:

- CLUSTER_PARTICIPANT = YES;

- REPOSITORY_PUBLISHING_DIRECTORY = "/media/share/Repository";

- REQUIRE_PUBLISHING_DIRECTORY = YES;

The first one tells the BI Server to look in the NQClusterConfig.INI file for further information on how to connect to a cluster. The second points to a shared directory on the network, where all BI Servers in the cluster must be able to find the common repository and write back any modifications. The third tells the BI Server not to start if the publishing directory cannot be found.
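Because REQUIRE_PUBLISHING_DIRECTORY = YES stops the BI Server from starting when the share is unavailable, it can be worth checking the mount on each node before a restart. The following is a minimal sketch of such a check, not part of OBIEE itself; the function name check_publishing_directory is made up for this example, and the path is the one used in this post.

```python
import os


def check_publishing_directory(path):
    """Return a list of problems found with a repository publishing directory.

    Hypothetical helper for illustration: it only checks what the operating
    system reports for the current user, which should be the account that
    runs the BI Server.
    """
    problems = []
    if not os.path.isdir(path):
        problems.append(f"{path} does not exist or is not a directory")
        return problems
    # Every BI Server must be able to read the common repository ...
    if not os.access(path, os.R_OK | os.X_OK):
        problems.append(f"{path} is not readable")
    # ... and write modifications back to it.
    if not os.access(path, os.W_OK):
        problems.append(f"{path} is not writable")
    return problems


if __name__ == "__main__":
    # Path taken from the NQSConfig.INI example in this post.
    for problem in check_publishing_directory("/media/share/Repository"):
        print("WARNING:", problem)
```

Run it as the operating system user that owns the BI Server processes; an empty result only means the directory looks usable from that node at that moment.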

The Cluster Controller and the BI Server clustering are configured in the NQClusterConfig.INI file. The Cluster Controller must be enabled, and then we must list the primary and (optionally) the secondary Cluster Controller. Each node must also know about all the BI Servers in the cluster, and which server holds the master copy of the repository.

- ENABLE_CONTROLLER = YES;

- PRIMARY_CONTROLLER = "aravis2.rmcvm.com";

- SECONDARY_CONTROLLER = "aravis3.rmcvm.com";

- SERVERS = "aravis2.rmcvm.com","aravis3.rmcvm.com";

- MASTER_SERVER = "aravis2.rmcvm.com";
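Since every node carries its own copy of these settings, a small consistency check can catch typos before the services are started. The sketch below is an illustration only: the parser handles just the simple NAME = value; lines shown above (treating # as a comment marker, which is an assumption about your files), and the rule that MASTER_SERVER should appear in SERVERS is my reading of the setup rather than something the configuration file enforces.

```python
import re

# The parameters from this post, as they would appear in NQClusterConfig.INI.
SAMPLE_CLUSTER_CONFIG = '''
ENABLE_CONTROLLER = YES;
PRIMARY_CONTROLLER = "aravis2.rmcvm.com";
SECONDARY_CONTROLLER = "aravis3.rmcvm.com";
SERVERS = "aravis2.rmcvm.com","aravis3.rmcvm.com";
MASTER_SERVER = "aravis2.rmcvm.com";
'''


def parse_settings(text):
    """Parse 'NAME = value;' lines into a dict of name -> list of values."""
    settings = {}
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # assumption: '#' starts a comment
        match = re.match(r"^(\w+)\s*=\s*(.*?);?$", line)
        if match:
            name, raw = match.groups()
            values = [v.strip().strip('"') for v in raw.split(",")]
            settings[name] = [v for v in values if v]
    return settings


def check_cluster(settings):
    """Return a list of likely mistakes in the parsed cluster settings."""
    def first(name):
        values = settings.get(name, [])
        return values[0] if values else None

    problems = []
    if not first("PRIMARY_CONTROLLER"):
        problems.append("no PRIMARY_CONTROLLER defined")
    if not first("SECONDARY_CONTROLLER"):
        problems.append("no SECONDARY_CONTROLLER: single point of failure")
    elif first("SECONDARY_CONTROLLER") == first("PRIMARY_CONTROLLER"):
        problems.append("primary and secondary controller are the same host")
    if first("MASTER_SERVER") not in settings.get("SERVERS", []):
        problems.append("MASTER_SERVER is not listed in SERVERS")
    return problems


if __name__ == "__main__":
    for problem in check_cluster(parse_settings(SAMPLE_CLUSTER_CONFIG)):
        print("WARNING:", problem)
```

To check a real node, read the text of its NQClusterConfig.INI file and pass it to parse_settings instead of the sample string.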

The next step is to copy the .RPD file into the /media/share/Repository mount point. (If you are setting this up on Windows machines, you must share a drive on the network and refer to the share by its UNC path, something like this: REPOSITORY_PUBLISHING_DIRECTORY="\\aravis0\share\Repository")

In our setup, we have one BI Server and the primary Cluster Controller running on aravis2, and a second BI Server and the secondary Cluster Controller running on aravis3. If we now start the Cluster Controller on aravis2, we see the following:

    [oracle@aravis2 setup]$ ./run-ccs.sh start
    Oracle BI Cluster Controller startup initiated.
    Execute the following command to check the Oracle BI Cluster Controller logfile and see if it started.
    tail -f /u01/app/oracle/product/obiee/OracleBI/server/Log/NQCluster.log
    [oracle@aravis2 setup]$ tail -f ../server/Log/NQCluster.log
    [71030] A connection with Cluster Controller aravis3.rmcvm.com:9706 was established.
    2008-12-21 15:43:59
    [71020] A connection with Oracle BI Server aravis3.rmcvm.com:9703 was established.
    2008-12-21 15:43:59
    [71010] Oracle BI Server aravis3.rmcvm.com:9703 has transitioned to ONLINE state.
    2008-12-21 15:43:59
    [71027] Cluster Controller aravis3.rmcvm.com:9706 has transitioned to ONLINE state.

Now make sure that the cluster controllers and BI servers are up and running on both instances.
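One quick way to confirm this from another machine is to test whether each service accepts TCP connections. This is a rough sketch, assuming the ports seen in the log above (9703 for the BI Server, 9706 for the Cluster Controller) and the two hostnames from this post; the function names are invented for the example. An open port only shows that something is listening, so the NQCluster.log file remains the place to confirm the ONLINE state.

```python
import socket

# Hosts and ports as they appear in this post; adjust for your environment.
ENDPOINTS = [
    ("aravis2.rmcvm.com", 9703),  # BI Server
    ("aravis2.rmcvm.com", 9706),  # primary Cluster Controller
    ("aravis3.rmcvm.com", 9703),  # BI Server
    ("aravis3.rmcvm.com", 9706),  # secondary Cluster Controller
]


def is_listening(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name resolution failures.
        return False


def report(endpoints):
    """Print one status line per endpoint and return the unreachable ones."""
    down = []
    for host, port in endpoints:
        up = is_listening(host, port)
        print(f"{host}:{port} {'listening' if up else 'NOT reachable'}")
        if not up:
            down.append((host, port))
    return down
```

Calling report(ENDPOINTS) from the Presentation Server host is a reasonable first test, since that is the machine that will need to reach the Cluster Controllers.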

Connecting to the BI Cluster

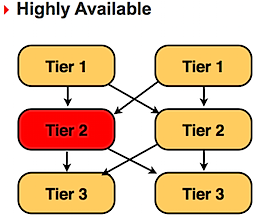

The Oracle BI ODBC driver is configured, by default, to connect to a regular non-clustered instance. Now that the primary Cluster Controller is responsible for all connections, we need to configure a new DSN for the Administration Tool.

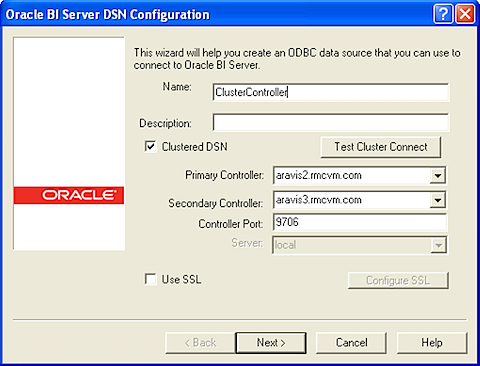

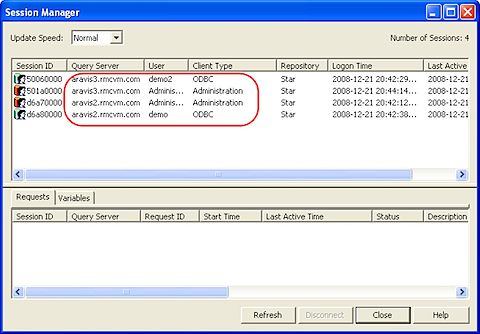

If we now start the Administration Tool, connect through the newly created ClusterController DSN and open the Cluster Manager, we can see the state of each BI Server and Cluster Controller.

Once we have established that both BI Servers are members of the cluster and that the primary and secondary Cluster Controllers are running, we can move on and change the ODBC connection for the Presentation Server. For the time being we accept that the Presentation Server is a potential single point of failure. My Presentation Server is called aravis1.rmcvm.com. I now need to edit the odbc.ini file, found in the ORACLEBI/setup/ directory. This configuration file defines all ODBC connections to the BI Server, and here we want to create a connection similar to the one we created above for the Administration Tool:

    [AnalyticsWeb_Cluster]
    Driver=/u01/app/oracle/product/obiee/OracleBI/server/Bin/libnqsodbc.so
    Description=Oracle BI Server
    ServerMachine=local
    Repository=
    FinalTimeOutForContactingCCS=60
    InitialTimeOutForContactingPrimaryCCS=5
    IsClusteredDSN=Yes
    Catalog=
    UID=Administrator
    PWD=
    Port=9703
    PrimaryCCS=aravis2.rmcvm.com
    PrimaryCCSPort=9706
    SecondaryCCS=aravis3.rmcvm.com
    SecondaryCCSPort=9706
    Regional=No

The Presentation Server then needs to be configured to use this ODBC connection, called AnalyticsWeb_Cluster, instead of the default AnalyticsWeb. Edit the ORACLEBIDATA/web/config/instanceconfig.xml file and change the ODBC connection name in the DSN tags:

<WebConfig>

<ServerInstance>

<DSN>AnalyticsWeb_Cluster</DSN>

<CatalogPath>/media/share/WebCat/catalog/samplesales</CatalogPath>
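The DSN name in instanceconfig.xml has to match a section in odbc.ini, and that section has to be flagged as clustered. As a rough sanity check, the sketch below reads an odbc.ini-style text with Python's standard configparser and reports anything that looks off. The function name and the choice of which keys to treat as required are my own assumptions for illustration; the sample text is the DSN from this post.

```python
import configparser

SAMPLE_ODBC_INI = """
[AnalyticsWeb_Cluster]
Driver=/u01/app/oracle/product/obiee/OracleBI/server/Bin/libnqsodbc.so
Description=Oracle BI Server
ServerMachine=local
Repository=
FinalTimeOutForContactingCCS=60
InitialTimeOutForContactingPrimaryCCS=5
IsClusteredDSN=Yes
Catalog=
UID=Administrator
PWD=
Port=9703
PrimaryCCS=aravis2.rmcvm.com
PrimaryCCSPort=9706
SecondaryCCS=aravis3.rmcvm.com
SecondaryCCSPort=9706
Regional=No
"""

# Assumption for this sketch: these keys must be non-empty in a clustered DSN.
REQUIRED_KEYS = ("PrimaryCCS", "PrimaryCCSPort")


def check_clustered_dsn(ini_text, dsn):
    """Return a list of problems found with the named DSN section."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.optionxform = str  # keep key names exactly as written
    parser.read_string(ini_text)
    if dsn not in parser:
        return [f"DSN [{dsn}] not found"]
    section = parser[dsn]
    problems = []
    if section.get("IsClusteredDSN", "No").lower() != "yes":
        problems.append("IsClusteredDSN is not Yes")
    for key in REQUIRED_KEYS:
        if not section.get(key):
            problems.append(f"{key} is missing or empty")
    if not section.get("SecondaryCCS"):
        problems.append("no SecondaryCCS: no failover controller for this DSN")
    return problems


if __name__ == "__main__":
    # The DSN name must be the one used in the <DSN> tag of instanceconfig.xml.
    for problem in check_clustered_dsn(SAMPLE_ODBC_INI, "AnalyticsWeb_Cluster"):
        print("WARNING:", problem)
```

A real odbc.ini may contain constructs that configparser rejects (duplicate keys, for instance), so treat a parse error as a prompt to inspect the file by hand rather than as proof that it is broken.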

Restart the Presentation Server and you should be ready to go. Log in to the Analytics web application and use the Administration Tool to monitor how new sessions are now created on each BI Server in turn, in a round-robin manner.
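To make the round-robin behaviour concrete, here is a toy model of how sessions would be spread over the BI Servers that are online. It is only an illustration of the idea and not the Cluster Controller's actual algorithm; the function name is invented for the example.

```python
from itertools import cycle


def assign_sessions(servers, online, session_count):
    """Assign sessions to the online servers in round-robin order.

    servers: all BI Servers in the cluster, in configured order.
    online: the subset currently reported as ONLINE.
    """
    available = [s for s in servers if s in online]
    if not available:
        raise RuntimeError("no BI Server is online")
    rotation = cycle(available)
    return [next(rotation) for _ in range(session_count)]


if __name__ == "__main__":
    cluster = ["aravis2.rmcvm.com", "aravis3.rmcvm.com"]
    # Both nodes online: sessions alternate between them.
    print(assign_sessions(cluster, set(cluster), 4))
    # One node lost: every session lands on the survivor.
    print(assign_sessions(cluster, {"aravis3.rmcvm.com"}, 3))
```

The second call shows the point of the whole exercise: with one BI Server gone, new sessions still have somewhere to go.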

Next we will look at how to set up multiple Presentation Servers to make that layer more fault tolerant, as well as putting the Java Host and Scheduler into the cluster.