Big Data and the Oracle Reference Architecture.

Last week I travelled from Europe to present at the RMOUG Training Days event in Denver, Colorado. As I blogged a couple of weeks back, this is one of my favourite user group conferences and it never fails to impress me. I expect to be wowed even more next year as they clock up their quarter century!

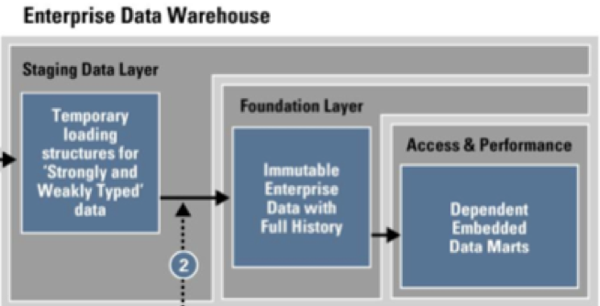

This year I presented twice: "Realtime Data Warehouse Tuning" and "Extending the Oracle Reference Data Architecture". More on tuning in another blog posting, but for now some thoughts on Big Data and architecture. If you are interested in seeing my paper, it is available on the Rittman Mead website here. The slides originally published on the RMOUG conference website were revised to incorporate some new graphics and information from Oracle's white paper, "Information Management and Big Data: A Reference Architecture", which was published just as I flew out to the USA. Fortunately, most of my original paper matched the thinking behind the new white paper. One change of note is the new description for the foundation layer - it is now "Immutable Enterprise Data with Full History" - see the image clipped from Oracle's new white paper.

I think the new definition is a much clearer description of the Foundation Layer than using terms such as 3NF. After all, architecture is more about why we do something than how we do it.

One of my early slides in the Big Data section of my RMOUG talk covers what I think Big Data means. Ask a dozen people what is meant by "Big Data" and you will probably hear at least two dozen answers. What is it that stops data warehouses being "big data", since they are both big (they can be really big) and contain data?

Some people argue that big data is unstructured data. However, to my mind, for data to be useful information it must have some degree of discernible structure; that is, it must be capable of being analysed, or else it is just noise. Obviously, text has structure and meaning, both from the ordering of characters to form words and from the order of words to make coherent blocks of text. Likewise, digital data from telemetry also has meaning. Harder to analyse are audio and video feeds, but even this is becoming commonplace in both the consumer marketplace and business; I have apps on my MacBook that tag photos based on who the software believes is in the picture, and apps on my iPhone that identify and download music from an audio clip recorded on the phone. Business and government users do similar things, be it identifying people in a crowd or transcribing voice.

People often speak of the 4 or 5 "V"s of big data:

- Volume - large amounts of data

- Velocity - fast arriving data

- Variety - it comes in all types of structure (including "no" structure)

- Value - there is "worth" in processing the information

As you see, I struggle with some of the usual definitions of big data as opposed to large data sets. For me, the key to Big Data is what we intend to do with it. If it is important to know the exact value of a single item (for example, billing from smart metering) then it is not big data; it is instead large-volume transactional data. If the exact value of a single data item is less important than deriving a statistical picture of the whole data set, we are in the realms of big data. If we lose a single record, it is probably not crucial to our analysis; if we fail to send a short-validity coupon to someone's smartphone as they walk past our store (or better yet, as they pass the competitor's store at the other end of the same shopping mall), then it probably does not matter.
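
To make that distinction concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the readings are synthetic, and the names (`readings`, `drop_one`, `lost_index`) are hypothetical rather than taken from any real metering system. It drops one record from a set of 10,000 and compares the effect on a statistical summary with the effect on a single customer's bill.

```python
import random
import statistics


def drop_one(records, index):
    """Return a copy of records with the item at position index removed."""
    return records[:index] + records[index + 1:]


# Synthetic smart-meter readings in kWh, one per (hypothetical) customer.
rng = random.Random(42)
readings = [round(rng.uniform(0.5, 3.0), 3) for _ in range(10_000)]

# Simulate losing a single record somewhere in the pipeline.
lost_index = 1234
after_loss = drop_one(readings, lost_index)

# Statistical view: the mean of the whole data set barely moves.
mean_full = statistics.fmean(readings)
mean_after_loss = statistics.fmean(after_loss)
relative_shift = abs(mean_full - mean_after_loss) / mean_full

# Transactional view: one customer's usage is never billed at all.
unbilled_kwh = readings[lost_index]

print(f"relative shift in mean usage: {relative_shift:.6%}")
print(f"kWh missing from one customer's bill: {unbilled_kwh}")
```

The same lost record is noise in the first case and a billing error in the second, which is the sense in which intended use, rather than size, separates the two kinds of data here.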