Does the BI Server System Component Make Full Use of My Multi-Socket, Multi-Core Server?

An interesting question came up during a client visit the other week, where their system had some performance issues and I was asked to take a look at it for them. One of their concerns was whether OBIEE 11g, in particular the BI Server system component within it, was making full use of the CPUs and cores on their server - in particular, they'd noticed just one or two CPUs at near 100% utilisation when certain queries were running, even though there were many, many more CPUs available that the BI Server could use. What was going on, and was there an in-built limitation with the BI Server where it serialised everything through a single CPU when many more were sitting idle?

Thinking the answer through, it's quite an interesting topic and there are actually several aspects to how the BI Server works in a multi-socket, multi-core environment. To take an example, consider a situation where OBIEE 11g is running on a server that's got four sockets, each with four cores in it, for a total of sixteen cores. Most modern OSs will consider this as sixteen CPUs, which is the processor count that the Oracle database, OBIEE and other applications will consider as available.
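As a quick sanity check on that arithmetic, here is a minimal Python sketch that works out the processor count for the four-socket, four-core example and compares it with what the OS reports on whatever machine it is run on. The function name `logical_cpu_count` and the `threads_per_core` parameter are my own, purely for illustration.

```python
import os


def logical_cpu_count(sockets: int, cores_per_socket: int, threads_per_core: int = 1) -> int:
    """Processor count an OS would typically present for this hardware layout."""
    return sockets * cores_per_socket * threads_per_core


# The example from the text: four sockets, four cores each.
example = logical_cpu_count(sockets=4, cores_per_socket=4)
print(f"Example server presents {example} CPUs")

# What the OS on this machine reports (can be None if it cannot be determined).
print(f"This machine reports {os.cpu_count()} CPUs")
```

The figure the OS reports is the one that applications see; they generally have no need to know how those CPUs are divided between sockets.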

These CPUs also generally support something called multi-threading; threads are sequences of operations owned by a process (such as nqsserver, the BI Server OS executable) that an operating system executes via a scheduler and some sort of time-sharing/multi-plexing arrangement, depending on the OS and hardware. All applications and services that run on a server that uses these features will benefit from multi-threading in that they can appear to run at the same time as other applications, but those that benefit the most are ones that themselves internally support multi-threading, and can therefore initiate several tasks at once - and one such application is the BI Server system component.

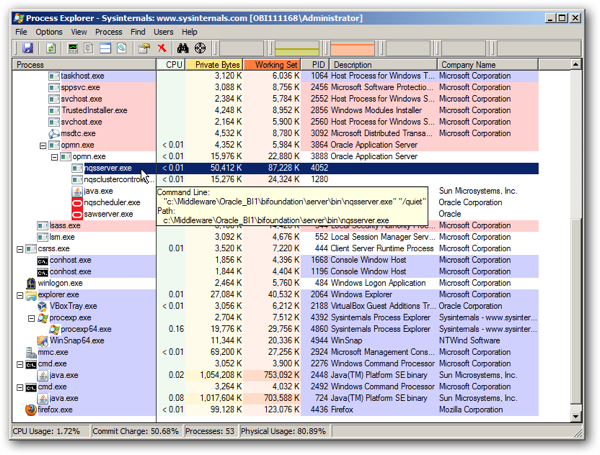

What this means in practice is that when the BI Server component starts up, it creates and reserves a number of threads in advance, determined by a number of parameters including SERVER_THREAD_RANGE. As queries come in from the BI Presentation Server, the BI Server component uses these threads to issue queries to the underlying source database, manage client connections, communicate with database servers and so forth. You can see these threads running and ready to perform tasks for the BI Server component by using a tool such as Process Explorer for Windows; in the screenshot below, you can see the single BI Server process (nqsserver.exe) running as a child process of opmn.exe, the Oracle Process Manager and Notification Server that manages OBIEE's legacy non-Java components.

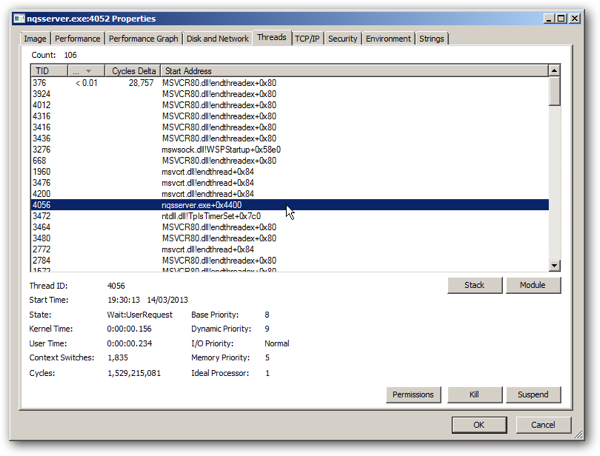

Double-clicking on that process shows you the threads associated with it; in this case, even for a largely unused system there are over a hundred threads open, ready to handle queries and perform other management and communication tasks.
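To illustrate the general idea of a pool of threads created in advance and left waiting for work, here is a minimal Python sketch. It is not how nqsserver is implemented, just the same pattern in miniature; `start_worker_pool`, `stop_worker_pool` and the fake "SQL" handler are all hypothetical names invented for the example.

```python
import queue
import threading


def start_worker_pool(size, handler):
    """Create `size` threads up front; they sit idle until a request arrives."""
    requests = queue.Queue()
    results = queue.Queue()

    def worker():
        while True:
            item = requests.get()      # blocks (using no CPU) until work arrives
            if item is None:           # sentinel: time to shut down
                break
            results.put((threading.current_thread().name, handler(item)))

    threads = [
        threading.Thread(target=worker, name=f"worker-{i}", daemon=True)
        for i in range(size)
    ]
    for t in threads:
        t.start()
    return requests, results, threads


def stop_worker_pool(requests, threads):
    """Send one shutdown sentinel per thread, then wait for them all to exit."""
    for _ in threads:
        requests.put(None)
    for t in threads:
        t.join()


if __name__ == "__main__":
    requests, results, threads = start_worker_pool(4, lambda sql: f"rows for: {sql}")
    for n in range(6):
        requests.put(f"SELECT {n}")
    stop_worker_pool(requests, threads)
    while not results.empty():
        print(results.get())
```

Note that the code never says which CPU a worker should run on; it just creates threads and hands them work, and which thread picks up which request is left to chance and the scheduler.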

So going back to the original questions; for a given end-user query, will the BI Server execute everything on a single CPU, or will it make use of all of the CPUs on the server? Well, given that the BI Server is multi-threaded, what this question is really asking is whether all of the BI Server's threads run on a single CPU, or whether all of the threads associated with a given query run on a single CPU, effectively ignoring much of the CPU capacity on the server.

Thinking it through a bit, any given single query is, to a certain extent, only really going to use a small part of the total number of CPUs available on a server, because it's not the BI Server that runs queries in parallel, it's the underlying database. For example, a single analysis against a single Oracle Database datasource would only really need a single BI Server thread to handle the query request, but when the underlying database receives the query, it might use a large number of its CPUs to process the query, returning results back to the BI Server to then pass back to the Presentation Server for display to the user. The BI Server wouldn't have any use for any more query threads, as it can't really do anything with them. The exception to this is queries that generate multiple physical SQLs, for example to join data from multiple sources together and return a single set of data to the user, for which the BI Server could benefit from a higher CPU count if each of these queries in turn led to lots of threads being used. Even then, two queries in themselves don't necessarily require two CPUs, because of course the BI Server, and the underlying CPUs, are themselves multi-threaded.
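The point about threads mostly waiting on the database can be sketched in a few lines of Python. This is a simulation under stated assumptions: `run_physical_query` and `run_logical_query` are made-up names, the data source names are invented, and `time.sleep` stands in for the time the database spends doing the real work.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_physical_query(source, seconds):
    """Pretend to send one physical SQL and wait for the database to answer."""
    time.sleep(seconds)  # the calling thread is idle while the "database" works
    return f"{source}: done"


def run_logical_query(physical_queries):
    """Issue every physical query on its own thread and collect the results."""
    started = time.perf_counter()
    with ThreadPoolExecutor(max_workers=len(physical_queries)) as pool:
        futures = [
            pool.submit(run_physical_query, source, seconds)
            for source, seconds in physical_queries
        ]
        results = [f.result() for f in futures]  # same order as submitted
    return results, time.perf_counter() - started


if __name__ == "__main__":
    results, elapsed = run_logical_query([("sales_db", 0.3), ("finance_db", 0.3)])
    print(results, f"elapsed {elapsed:.2f}s")
```

Two physical queries on two threads should finish in roughly the time of the slower one rather than the sum of both, yet neither thread uses much CPU while it waits, which is why the number of threads in flight tells you little about the number of CPUs needed.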

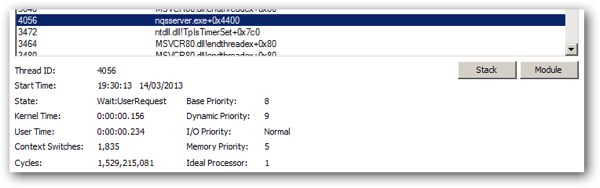

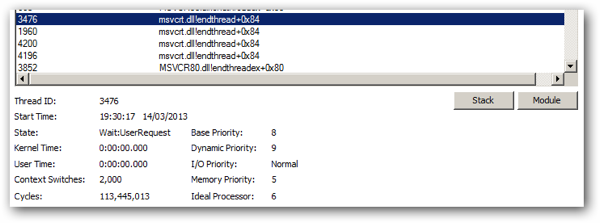

So let's go back to Windows and see what happens when individual queries come in. The Threads view in Process Explorer actually displays, for each thread, the CPU (processor) that it is most likely to next run on, as you can see from the screenshot below:

Looking at the threads at the top of the list (those that have run most recently), see how the Ideal Processor value is now 6 - this is an eight-processor Windows Server 2008 64-bit server it's running on.

So why does it use the term Ideal Processor, rather than just Processor? In fact, which CPU a thread runs on (or, technically, is scheduled to run on) is actually determined by the OS and is effectively "abstracted" away from individual processes, as long as they are designed for multi-threading and don't have any serialised single-threaded limitations. This is how the BI Server is written, with no explicit code to spread threads over more than one CPU, but with the OS doing this for it instead based on a number of factors. It's also where that annoyingly vague set of guidelines comes from around whether it's best to vertically-scale the BI Server component on a multi-processor 64-bit system - some OSs and hardware platforms might, in the background, limit processes to a subset of the available CPUs, some might not, hence the vague guidance that it "might" be worth adding more BI Server components into an Oracle Instance, just as it "might" make better use of overall memory - you'll have to try it on your own system and see.
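If you want to check whether the OS is restricting a process to a subset of the CPUs, one rough way to do it from Python is sketched below. It reports on the Python process itself, not nqsserver, and `os.sched_getaffinity` only exists on some Unix platforms (Linux, for example), so the fallback simply assumes no restriction. The helper name `allowed_cpus` is my own.

```python
import os


def allowed_cpus():
    """CPU numbers the OS will schedule this process on, as far as we can tell."""
    if hasattr(os, "sched_getaffinity"):
        # Available on Linux and some other Unix systems; 0 means "this process".
        return sorted(os.sched_getaffinity(0))
    # Assumption: where affinity can't be queried this way, treat all CPUs as allowed.
    return list(range(os.cpu_count() or 1))


if __name__ == "__main__":
    cpus = allowed_cpus()
    print(f"OS reports {os.cpu_count()} CPUs; this process may run on {len(cpus)}: {cpus}")
```

If the two numbers differ, something (the OS, a container or an administrator) has limited the process to a subset of the CPUs, which is exactly the situation in which experimenting with additional components might pay off.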

To conclude then - all things being equal, the BI Server should make use of all of the CPUs that the underlying operating system presents to it, with the OS itself deciding which threads are scheduled against which CPUs. In theory, all CPUs on the server are available to each BI Server component, but each OS is different and it might be worth experimenting if you're sure that certain CPUs aren't being used. This is unlikely though, and the main reason you'd really consider vertical scale-out of BI Server components is for fault-tolerance, or if you're using a 32-bit OS and each process can only see a subset of the total overall memory. And bear in mind that however many CPUs the BI Server has available to it, for queries that send just a single SQL statement down to the underlying database server, adding more CPUs or faster CPUs isn't going to help, as only a single (or so) thread will be needed to send the query from the BI Server to the database, and it's the database that's doing all of the work - all that this would help with is compilation and post-aggregation work, and enabling the server to handle a higher number of concurrent users. Invest in a better underlying database instead, sort out your data model, and make sure your data source back-end is as optimised as possible.