Optimizing TimesTen for Exalytics Queries using TimesTen's Index Advisor

Late last week I posted an article on our blog on loading detail-level data into Exalytics. The article was really intended as an introduction to the Essbase Aggregate Storage Option (ASO), and how it could offer an alternative to TimesTen when storing large amounts of detail-level data; to make the case for ASO, though, I needed to show how using TimesTen for this type of scenario might be an issue. Within the context of Exalytics, TimesTen is really positioned as an aggregate cache, with the Summary Advisor automatically recommending and storing aggregates in TimesTen, and this is a great use-case for it. But as I showed in the article (and as others have also evidenced), TimesTen can start to slow down when a query returns large amounts of data, as it doesn't currently use common VLDB features like partitioning and parallel query to help speed up queries.

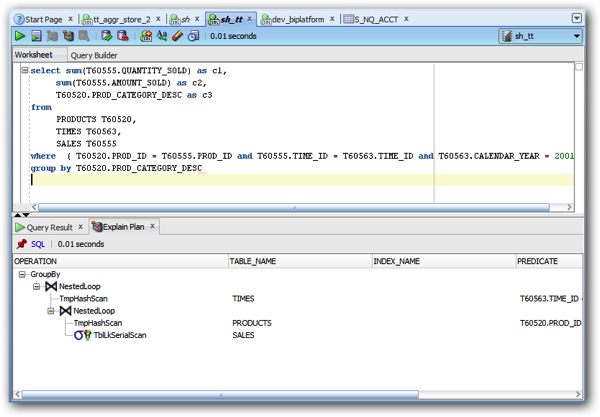

But there was a crucial step I missed out when preparing the TimesTen datasets for querying. Whilst the ttImportFromOracle utility creates indexes for the TimesTen tables it's creating, it bases them on the ones on the corresponding Oracle tables. That isn't a bad place to start, but it might not suit either TimesTen (which has its own, memory-optimised indexes) or the particular queries you're then firing at it. To take an example: in the query that I ran against the Sales History data in TimesTen in the previous article, the execution plan showed two TmpHashScan operations, like this:

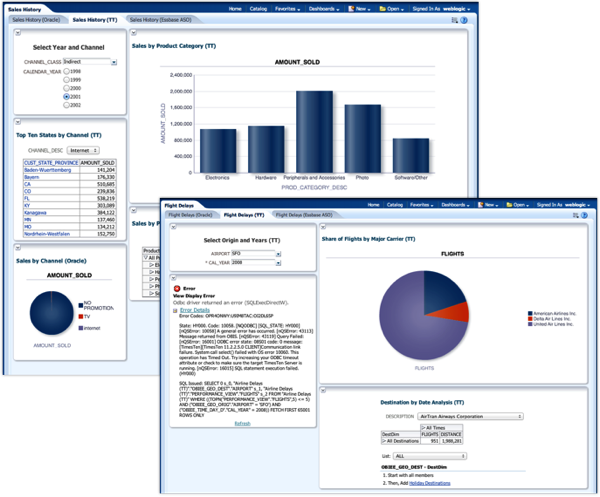

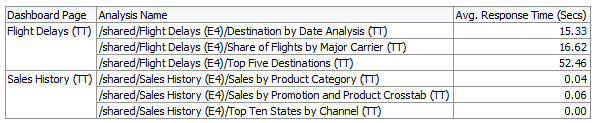

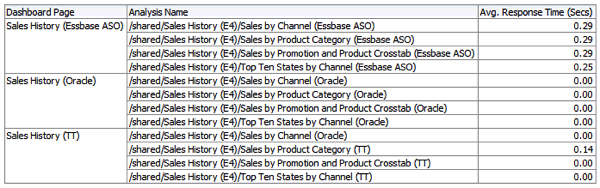

Now TmpHashScan operations actually involve TimesTen creating temporary indexes in the background to speed up the query, but building them of course adds to the query's execution time. If we'd created those indexes beforehand instead, we wouldn't incur that hit during the actual query, and the response time would go down. Now to be fair to the Oracle database source, and the Essbase ASO source, there are also query optimisation steps that we could perform for those too, but let's look at what happens if we run an index optimisation process on the TimesTen datasets - Sales History, with around 900k rows of fact table data, and Flight Delays, with around 120m rows of data - as analysed in the two dashboards below (and notice how the Flight Delays one even has an analysis that times out, such is the performance issue with the data "as is"):

Looking at the current response times of the analyses on these two dashboards, you can see that the Sales History queries return data instantaneously, whereas the Airline Delays ones have much longer response times - anything from 15 seconds on average up to a minute - and that minute is the query timeout.

Now there's actually a utility within TimesTen, called the "Index Advisor", that helps with index optimisation. The way it works is that you start a capture from the ttisql command-line utility, run a bunch of queries to generate a workload, then go back to ttisql to end the capture and output recommendations based on the table scans, joins, sorts and grouping operations within those queries. When capturing the workload, you can do so either for just that ttisql session or for the whole database, which would make more sense in an Exalytics-type environment. So let's enable this utility, switch over to the dashboard and run some queries, and see what comes out.
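As a rough sketch of that sequence, the small Python helper below just builds the ttisql commands for each phase, so that the same capture level is used throughout. The function name `capture_commands` and the phase labels are my own, for illustration only; the three built-in procedures are the ones used in this post, and I'm assuming level 0 means "this connection" and level 1 means "whole database", with the second argument to the start call left at the 0 used here.

```python
def capture_commands(level=1, mode=0):
    """Build the ttisql commands for one Index Advisor capture cycle.

    level: 0 = current connection only, 1 = whole database (assumed meaning).
    mode:  passed straight through to the start call; this post uses 0.
    """
    if level not in (0, 1):
        raise ValueError("capture level must be 0 (connection) or 1 (database)")
    return {
        # Run this first, then go and exercise the dashboards.
        "before_workload": [
            f"call ttindexAdviceCaptureStart({level},{mode});",
        ],
        # Run these once the workload is representative enough.
        "after_workload": [
            f"call ttindexAdviceCaptureEnd({level});",
            f"call ttindexAdviceCaptureOutput({level});",
        ],
    }


if __name__ == "__main__":
    for phase, commands in capture_commands(level=1).items():
        print(f"-- {phase}")
        for command in commands:
            print(command)
```

The only real point of wrapping this up is the shared `level` argument: the output call should ask for the same level that the capture was started at, otherwise there is nothing for it to report.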

C:\Users\Administrator>ttisql "DSN=tt_exalytics_3"

Copyright (c) 1996, 2013, Oracle and/or its affiliates. All rights reserved.

Type ? or "help" for help, type "exit" to quit ttIsql.

connect "DSN=tt_exalytics_3";

Connection successful: DSN=tt_exalytics_3;UID=Administrator;DataStore=C:\TimesTen\tt_data_3;DatabaseCharacterSet=AL32UTF8;ConnectionCharacterSet=US7ASCII;DRIVER=C:\TimesTen\TT1122~2\bin\ttdv1122.dll;LogDir=C:\TimesTen\tt_data_3;PermSize=9000;TempSize=2000;LockWait=600010.0;SQLQueryTimeout=600000;TypeMode=0;QueryThreshold=600000;PLSCOPE_SETTINGS=IDENTIFIERS:NONE;RangeIndexType=1;

(Default setting AutoCommit=1)

Command> call ttindexAdviceCaptureStart(1,0);

I then run some queries on the TimesTen Sales History dashboard, filtering on year and channel class, and then after a while go back to ttisql to stop the capture process, and then output the index advice.

Command> call ttindexAdviceCaptureEnd(1);

Command> call ttindexAdviceCaptureOutput(1);

< 33, create hash index SALES_i6 on SH.SALES(CHANNEL_ID); >

< 2, create hash index SALES_i10 on SH.SALES(PROD_ID); >

< 1, create unique hash index SALES_i12 on SH.SALES(TIME_ID); >

< 1, create hash index SALES_i12 on SH.SALES(TIME_ID); >

< 16, create hash index PRODUCTS_i7 on SH.PRODUCTS(PROD_ID,PROD_CATEGORY); >

< 1, create hash index PRODUCTS_i13 on SH.PRODUCTS(PROD_ID,PROD_SUBCATEGORY); >

< 35, create hash index TIMES_i8 on SH.TIMES(TIME_ID,CALENDAR_YEAR); >

< 1, create index TIMES_i11 on SH.TIMES(CALENDAR_YEAR); >

< 8, create unique hash index CUSTOMERS_i9 on SH.CUSTOMERS(CUST_ID); >

< 1, create hash index CUSTOMERS_i9 on SH.CUSTOMERS(CUST_ID); >

10 rows found.

Command>
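If you paste output like this into a file, a few lines of Python can turn it into something easier to review. This is only a sketch: the `Advice` tuple and `parse_advice` function are names I've made up, and I'm assuming each row has the shape `< count, create ... ; >` shown above, with the first column being (as I understand it) the number of captured statements that would have benefited from the index.

```python
import re
from typing import NamedTuple

# One advisor row looks like: < 33, create hash index SALES_i6 on SH.SALES(CHANNEL_ID); >
ROW = re.compile(r"<\s*(\d+)\s*,\s*(create\s+.*?;)\s*>", re.IGNORECASE)


class Advice(NamedTuple):
    count: int       # first column of the advisor row
    statement: str   # the CREATE INDEX statement, including the trailing ';'


def parse_advice(text):
    """Return the advisor rows found in text, highest count first.

    Lines that are not advisor rows (prompts, 'N rows found.') are ignored.
    sorted() is stable, so rows with equal counts keep their original order.
    """
    rows = [Advice(int(m.group(1)), m.group(2)) for m in ROW.finditer(text)]
    return sorted(rows, key=lambda advice: advice.count, reverse=True)


if __name__ == "__main__":
    sample = """
    < 33, create hash index SALES_i6 on SH.SALES(CHANNEL_ID); >
    < 35, create hash index TIMES_i8 on SH.TIMES(TIME_ID,CALENDAR_YEAR); >
    10 rows found.
    """
    for advice in parse_advice(sample):
        print(advice.count, advice.statement)
```

Sorting by the count puts the recommendations that touched the most statements at the top, which is a reasonable place to start if you don't want to create every index blindly.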

I then do the same whilst running some queries against the Airline Delays (130m+ rows) dataset, and get the following output:

Command> call ttindexAdviceCaptureStart(1,0);

Command> call ttindexAdviceCaptureEnd(1);

Command> call ttindexAdviceCaptureOutput(1);

< 20, create index OBIEE_GEO_ORIG_i14 on BI_AIRLINES.OBIEE_GEO_ORIG(AIRPORT); >

< 12, create hash index OBIEE_GEO_ORIG_i19 on BI_AIRLINES.OBIEE_GEO_ORIG(AIRPORT); >

< 8, create unique index PERFORMANCE_VIEW_i15 on BI_AIRLINES.PERFORMANCE_VIEW(DEST); >

< 1, create index PERFORMANCE_VIEW_i15 on BI_AIRLINES.PERFORMANCE_VIEW(DEST); >

< 20, create unique hash index PERFORMANCE_VIEW_i16 on BI_AIRLINES.PERFORMANCE_VIEW(DEST); >

< 1, create hash index PERFORMANCE_VIEW_i16 on BI_AIRLINES.PERFORMANCE_VIEW(DEST); >

< 13, create unique hash index UNIQUE_CARRIERS_i17 on BI_AIRLINES.UNIQUE_CARRIERS(CODE); >

< 1, create hash index UNIQUE_CARRIERS_i17 on BI_AIRLINES.UNIQUE_CARRIERS(CODE); >

< 4, create unique hash index OBIEE_TIME_DAY_D_i18 on BI_AIRLINES.OBIEE_TIME_DAY_D(CALENDAR_DATE,CAL_YEAR); >

< 10, create hash index OBIEE_TIME_DAY_D_i18 on BI_AIRLINES.OBIEE_TIME_DAY_D(CALENDAR_DATE,CAL_YEAR); >

< 5, create unique hash index OBIEE_TIME_DAY_D_i20 on BI_AIRLINES.OBIEE_TIME_DAY_D(CALENDAR_DATE); >

< 1, create hash index OBIEE_TIME_DAY_D_i20 on BI_AIRLINES.OBIEE_TIME_DAY_D(CALENDAR_DATE); >

12 rows found.

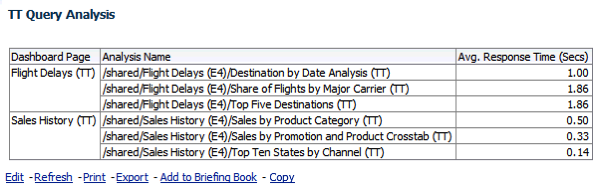

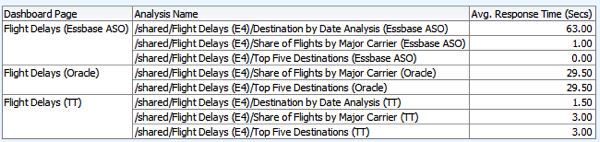

So that's 10 indexes recommended for the Sales History dataset, and 12 for the Flight Delays one. I therefore go back to the ttisql command line and execute each of the index creation commands, and then run the two dashboards again. And the performance improvement is significant.
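One thing worth noticing in the advisor output is that several recommendations share an index name (SALES_i12 and CUSTOMERS_i9, for example, each appear as both a unique and a non-unique variant), and only one index of a given name can be created. The sketch below picks one statement per name before you paste anything into ttisql. The selection rule is purely my own assumption, not anything the Index Advisor prescribes: keep the variant with the higher count and, on a tie, prefer the non-unique one, since it can't be rejected because of duplicate values. The function name `one_per_name` is hypothetical too.

```python
import re

# Pulls out the optional UNIQUE keyword and the index name from a CREATE INDEX statement.
NAME = re.compile(
    r"create\s+(unique\s+)?(hash\s+)?index\s+(\S+)\s+on\s", re.IGNORECASE
)


def one_per_name(rows):
    """Keep a single statement per index name.

    rows is an iterable of (count, statement) pairs. The policy (an
    assumption, see above) is: higher count wins; on a tie the non-unique
    variant wins. The result follows the order in which names first appear.
    """
    best = {}
    for count, statement in rows:
        match = NAME.match(statement.strip())
        if match is None:
            continue  # not a CREATE INDEX statement we recognise
        is_unique = match.group(1) is not None
        name = match.group(3)
        rank = (count, not is_unique)
        if name not in best or rank > best[name][0]:
            best[name] = (rank, statement.strip())
    return [statement for _, statement in best.values()]


if __name__ == "__main__":
    advice = [
        (1, "create unique hash index SALES_i12 on SH.SALES(TIME_ID);"),
        (1, "create hash index SALES_i12 on SH.SALES(TIME_ID);"),
        (8, "create unique hash index CUSTOMERS_i9 on SH.CUSTOMERS(CUST_ID);"),
        (1, "create hash index CUSTOMERS_i9 on SH.CUSTOMERS(CUST_ID);"),
    ]
    print("\n".join(one_per_name(advice)))
```

Whether the unique or non-unique variant is the right one really depends on the data, so treat the output as a starting script to review rather than something to run unchecked.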

Oracle's recommendation is to repeat this process several times, as further recommendations can come out once the first set of indexes is in place, so I run it again to see if this is the case.

Command> call ttindexAdviceCaptureStart(1,0);

Command> call ttindexAdviceCaptureEnd(1);

Command> call ttindexAdviceCaptureOutput(0);

0 rows found.

So no more recommendations then, and a much-improved query response time from TimesTen (though note that this last output call asked for level 0, whereas the capture ran at level 1, so it would be worth re-checking with ttindexAdviceCaptureOutput(1) to be sure). But how does this stack up against the plain Oracle database source, and Essbase ASO? For the Sales History queries, all sources perform well now, with nothing really in it between the three, except that the Essbase source is now actually the slowest (if only by half a second or so).

With the Airline Delays dashboards though, TimesTen is now a winner, with sub-second response times even across 130m+ rows, compared to many seconds for the other sources.

I therefore stand corrected on TimesTen not being suitable for querying large, sparse datasets, AS LONG as you run the Index Advisor after you've run a representative workload (and I even forgot to re-gather stats after creating the new indexes, which would probably have improved things even more). So, on that basis, do I take back my recommendation to consider Essbase ASO for these large, sparse datasets? Well, to be honest, I've not done any optimisation on Essbase yet, or used the Summary Advisor on the plain Oracle source, so it looks like there is one more round of testing to do before coming to some final conclusions. Check back tomorrow for the final post in this series.