Rittman Mead at Collaborate 16: Data Integration Focus

It’s that time of year again when Oracle technologists from around the world gather in Las Vegas, Nevada, to teach, learn, and, of course, network with their peers. The Collaborate 16 conference, running for 10 years now, is a collaboration, if you will, between the Independent Oracle Users Group (IOUG), Oracle Applications Users Group (OAUG), and Quest International Users Group (Quest), making it one of the largest user group conferences in the world. Rittman Mead will once again be in attendance, and I will be giving two data integration-focused presentations over the course of the week.

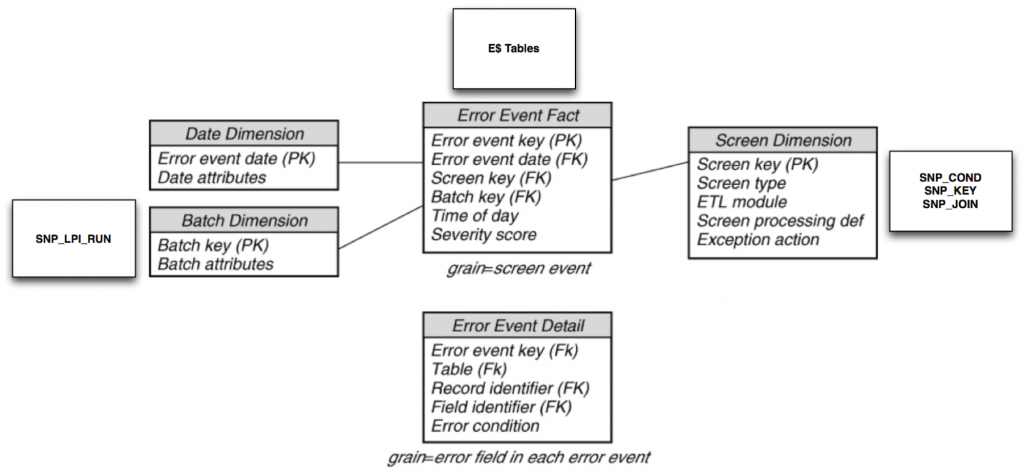

My first session at Collaborate 16, “A Walk Through the Kimball ETL Subsystems with Oracle Data Integration,” scheduled for Monday, April 11, at 10:30 a.m., will focus on how we can implement the ETL Subsystems using Oracle Data Integration solutions. As you know, Big Data integration has been the hot topic over the past few years, and it’s well supported in the Oracle Data Integration product suite (Oracle Data Integrator, GoldenGate, & Enterprise Data Quality). But not all analytics require big data technologies; labor cost, revenue, and expense reporting are good examples. Ralph Kimball, dimensional modeling and data warehousing expert and founder of The Kimball Group, spent much of his career working to build an enterprise data warehouse methodology that can meet these reporting needs. His book, “The Data Warehouse ETL Toolkit,” is a guide for many ETL developers. This session will walk you through his ETL Subsystem categories (Extracting, Cleaning & Conforming, Delivering, and Managing) and describe how the Oracle Data Integration products are perfectly suited for the Kimball approach.

I go into further detail on one of the ETL Subsystems in an upcoming IOUG Select Journal article, titled “Implement an Error Event Schema with Oracle Data Integrator.” The Select Journal is a technical magazine published quarterly and available exclusively to IOUG members. My recent post, “Data Integration Tips: ODI 12c Repository Query – Find the Mapping Target Table,” shows a bit of the detail behind the research performed for the article.

If you’re not familiar with the Kimball approach to data warehousing, I would definitely recommend reading one (or more) of the Kimball Group’s published books on the subject. I would also recommend attending one of their training courses, but unfortunately for the data warehousing community, the Kimball Group closed shop in December 2015. But hey, the good news is that two of the former Kimball team members have joined forces at Decision Works, and they offer the exact same training they used to deliver under The Kimball Group name.

On Thursday, April 14, at 11 a.m., I will dive into the recently released Oracle GoldenGate for Big Data 12.2 in a session titled “Oracle GoldenGate and Apache Kafka: A Deep Dive into Real-Time Data Streaming.” The challenge for us as data integration professionals is to combine relational data with other non-structured, high-volume, and rapidly changing datasets, known in the industry as Big Data, and transform them into something useful. Not just that, but we must also do it in near real-time, using a big data target system such as Hadoop. The topic of this session, real-time data streaming, offers a great solution for that challenging task. By combining GoldenGate, Oracle’s premier data replication technology, and Apache Kafka, the latest open-source streaming and messaging system for big data, we can implement a fast, durable, and scalable solution.

If you plan to be at Collaborate 16 next week, feel free to drop me a line in the comments, via email at [email protected], or on Twitter @mRainey. I’d love to meet up and have a discussion around my presentation topics, data integration, or really anything we’re doing at Rittman Mead. Hope to see you all there!