Extreme BI: Capturing Change with Oracle GoldenGate and ODI : Part 1 (Introduction)

Before I jump into the first of two posts discussing change data capture with Oracle GoldenGate and Oracle Data Integrator 11g, I would like to first introduce myself. My name is Michael Rainey and I am the newest member of the Rittman Mead America team, joining just last month as an ODI consultant. I have been working with Oracle Data Integrator 11g since it was first released, and was part of a team at my former employer, Pacific Northwest National Laboratory, who developed an innovative solution for the rapid migration of SQL-based metadata to ODI Interfaces through use of the ODI SDK. I was able to present a brief overview of our solution at Oracle OpenWorld 2011 in a Customer Perspectives session. I plan on sharing some of my knowledge with you regarding data integration through this blog, and hopefully some future conference sessions. But, enough about me, on to the real topic of this post, Extreme BI: Capturing Change with Oracle GoldenGate and ODI.

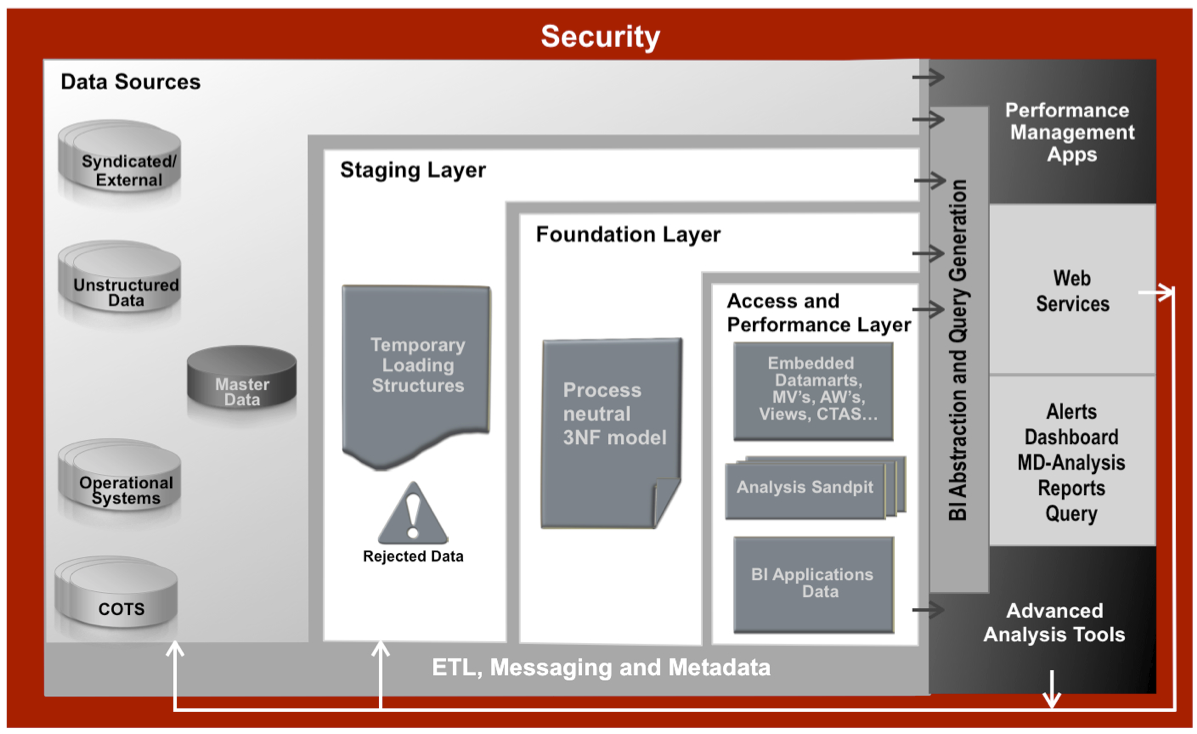

A few months back, Stewart Bryson described the need for a real-time data warehousing solution for loading the Foundation Layer with raw source data changes in a blog post titled Agile Data Warehousing with Exadata and OBIEE: Puzzle Pieces. As he described the importance of the Foundation Layer in delivering Extreme BI, he noted that Oracle GoldenGate, though a powerful and flexible replication technology, is lacking a simple setup for tracking source data changes in the data warehouse. He also noted that there may be a workaround available with some Oracle GoldenGate configuration and a little coding, and alluded to a potential future blog post (which you are now reading). What follows is one approach to solving this problem, using Oracle Data Integrator (ODI) 11g and Oracle GoldenGate (OGG).

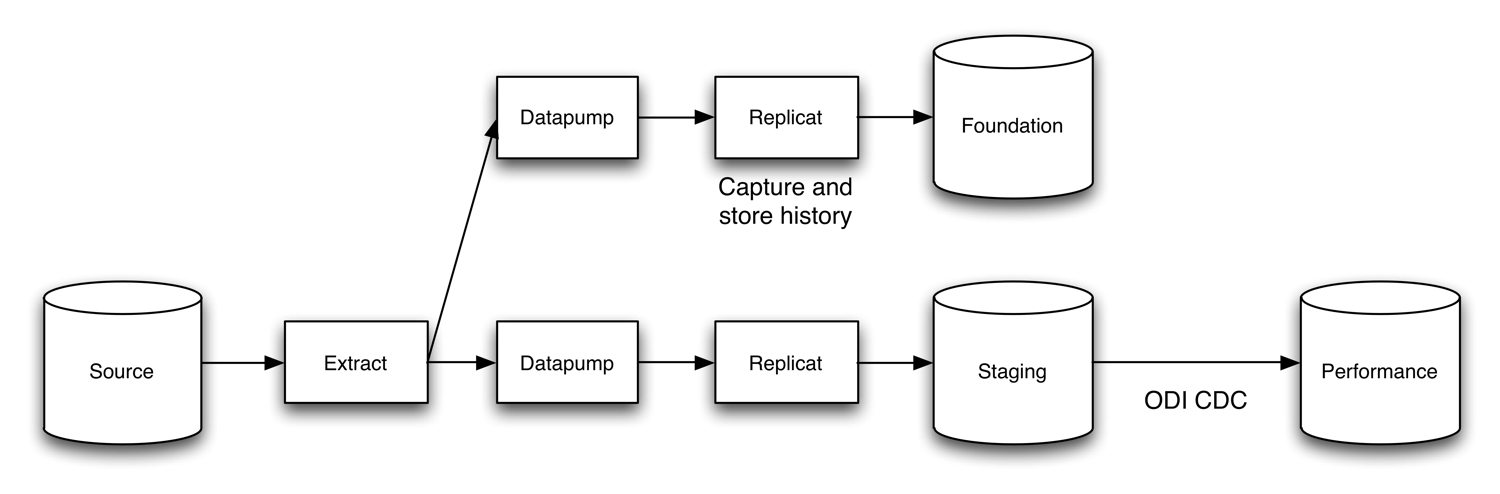

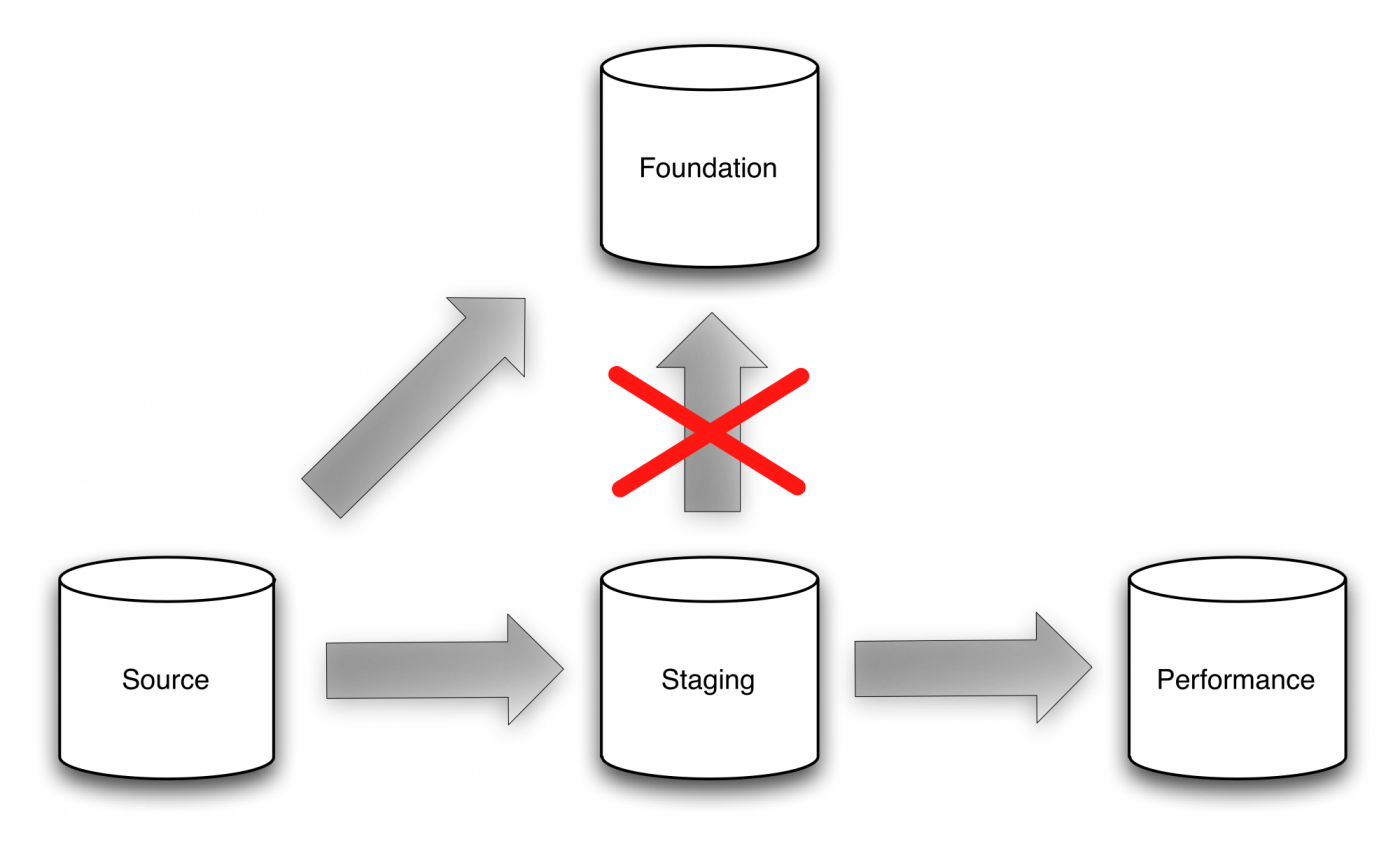

Our solution begins with Oracle GoldenGate and its real-time replication capabilities, integrating with Oracle Data Integrator 11g and its journalizing change data capture implementation. The setup will be as follows, with the replication from GoldenGate loading both Staging and Foundation layers, and then the ODI CDC moving data through Staging into the Performance layer. In this series of posts, we’ll focus on the replication piece, saving the Performance layer load for another time.