Diving Deeper into BI Apps 11.1.1.7.1 use of ODI11g for Data Loading

A few months ago I posted a series of articles on the new BI Apps 11.1.1.7.1 release, which uses ODI11g in the background to perform data loads, rather than Informatica PowerCenter. Since then, I've collaborated with Accenture's Kevin McGinley on a "getting started" BI Apps 11.1.1.7.1 installation cookbook, where I went through the install and Kevin did the data load, with the aim of providing readers with a "minimum viable install" OBIA 11.1.1.7.1 system. For me though, the most interesting part of this new release is how it embeds ODI11g into the platform infrastructure, and how elements of ODI are used (and extended) to provide a proper, embedded ETL tool that Oracle can presumably take forward in a way that they couldn't with Informatica. In the next couple of blog posts then, I'm going to look at this ODI integration in a bit more detail, starting today with "lifting the lid" on the configuration and data load process, to see just how the various BI Apps platform tools integrate with ODI and control how it works.

If you've worked with earlier, Informatica-based releases of the BI Apps, you'll be familiar with the many, many manual steps involved in configuring and integrating the various platform components, including two initial configuration phases that are particularly involved and prone to operator error:

- Linking all of the ETL, ETL control (DAC), database and other elements together, so that the DAC can run tasks that, in turn, run Informatica workflows that, in turn, load data into the BI Apps data warehouse.

- Preparing lots of CSV files to contain the various domain values, segment codes, flex field details and so on for your source systems, even though you know these same details are held in tables in EBS, for example.

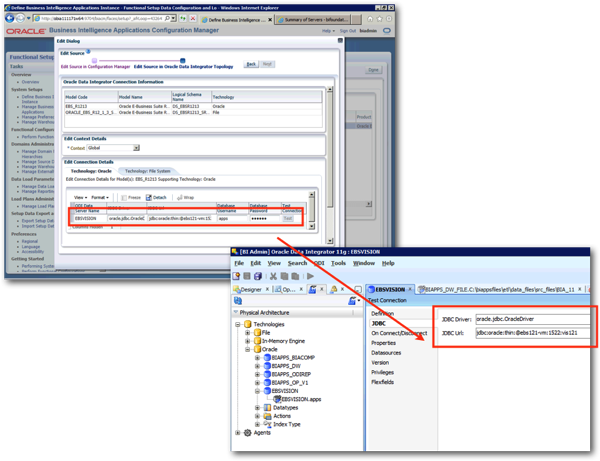

BI Apps 11.1.1.7.1 simplifies this setup process greatly, though, by automatically pushing all source and target configuration details entered into the web-based Configuration Manager application directly into ODI's repository. You can see this in action when defining the EBS data source in our cookbook example: in the screenshots below, connection details for the EBS database are entered into Configuration Manager, and then appear in ODI Studio's Topology navigator.

Configuration Manager stores copies of these settings in its own metadata tables, in this instance C_DATA_SERVER in a schema called DEV_BIACOMP, which can be thought of as similar to some of the DAC repository tables in BI Apps 7.9.x. The difference here is that Configuration Manager automatically pushes the values through to the ODI repository held in the DEV_BIA_ODIREPO schema, rather than you having to manually create the same entries in Informatica Workflow Manager yourself.

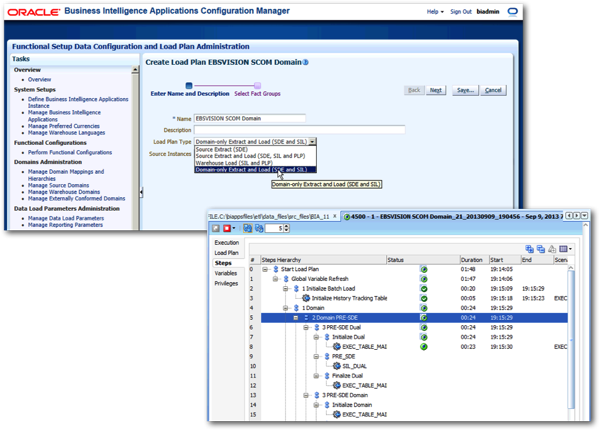

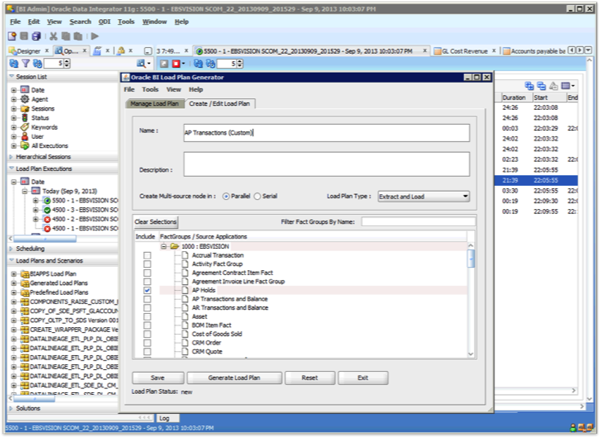
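To make the "enter once, appear in both places" idea concrete, here is a minimal Python sketch. It is purely illustrative: the post doesn't show how Configuration Manager actually writes to the ODI repository, so the class names, field names and connection values below are all hypothetical stand-ins rather than real BI Apps or ODI APIs.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class DataServer:
    """Hypothetical connection record; the fields are illustrative only."""
    name: str
    jdbc_url: str
    user: str


class OdiTopologyStub:
    """Stand-in for the ODI repository topology (not the real ODI SDK)."""

    def __init__(self):
        self.data_servers = {}

    def upsert(self, server):
        self.data_servers[server.name] = asdict(server)


class ConfigManagerStub:
    """Stand-in for Configuration Manager's own metadata store."""

    def __init__(self, odi):
        # Loosely mirrors the role of the C_DATA_SERVER table mentioned above.
        self.c_data_server = {}
        self._odi = odi

    def register_source(self, server):
        # Keep a local copy of the settings...
        self.c_data_server[server.name] = asdict(server)
        # ...and push the same values through to the ETL tool's topology.
        self._odi.upsert(server)


odi = OdiTopologyStub()
config_manager = ConfigManagerStub(odi)
config_manager.register_source(
    DataServer(
        name="EBS_SOURCE",  # made-up name
        jdbc_url="jdbc:oracle:thin:@//ebs-host.example:1521/EBSDB",  # made-up URL
        user="apps_ro",  # made-up user
    )
)
print(odi.data_servers["EBS_SOURCE"]["jdbc_url"])
```

The point of the sketch is simply that the operator touches one interface, and both stores end up holding the same connection details.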

It's a similar story with the setting up of domain values. With BI Apps 11.1.1.7.1, you configure a special type of load plan, analogous to BI Apps 7.9.6.4's execution plans, to read from the various EBS (in this instance) metadata tables and set up the domain values automatically. In the screenshots below, the one on the left shows one of the special "domain extract only" load plans being set up for a particular set of fact table groups, while the screenshot on the right shows the same load plan being executed, loading domain values into the Configuration Manager metadata tables.

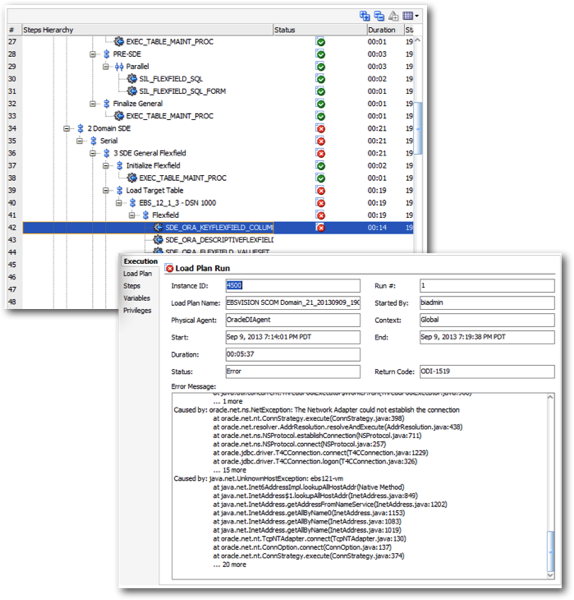

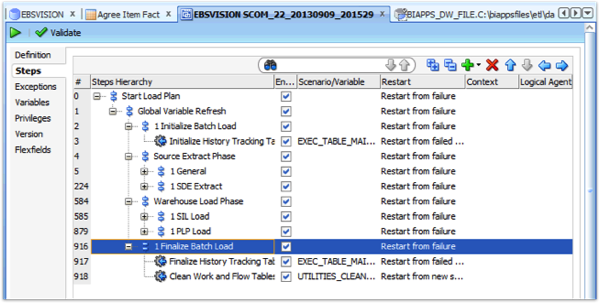

Notice also that what ODI11g uses to load data into these tables is a standard ODI11g 11.1.1.5+ load plan, and it's presumably to support the BI Apps and their more complex loading routines and dependencies that load plans were introduced. And because these are standard load plans, when they go wrong an ODI developer will find them a lot easier to diagnose and debug than Informatica/DAC load routines, which left log files all over the place and used Informatica terminology for the load, rather than ODI's familiar ELT approach. In the screenshots below, this domain-load process has failed at the point where data starts to get read from the EBS instance, and looking at ODI's error message view, you can quickly see that the error was caused by the EBS server being unreachable.

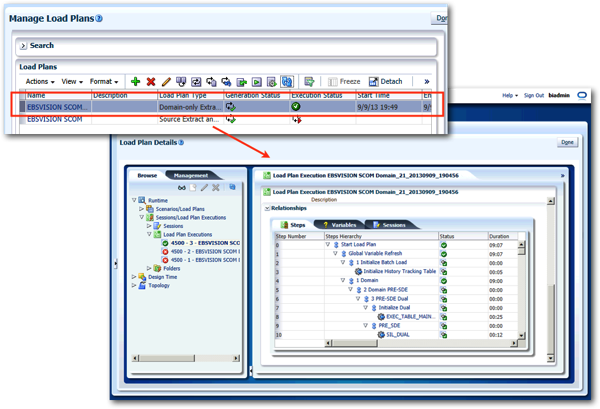

So far the screenshots of ODI's internals that you've seen are from ODI Studio, the Java thick-client developer tool that all ODI developers are familiar with. But the BI Apps tries to hide the details of the ETL tool from you, treating it as an embedded, "headless" server that ideally you administer as much as possible from Configuration Manager (for system-wide configuration) and Functional Setup Manager (for application-specific configuration). To achieve this, Configuration Manager can run ODI Console embedded within its web view, so it's possible to click on a load plan, view its status and drill into the individual steps all from your web browser, with no ODI Studio install needed.

So how do these load plans get generated, when there's no DAC and no historic way within ODI of dynamically generating load plans based on metadata? What enables this dynamic creation of load plans is a new utility included with BI Apps 11g called "Load Plan Generator", which ships both as a JEE library within the WebLogic domain and as a plug-in to ODI Studio, the latter for creating test load plans as part of the ETL customisation process.

This blog post by Oracle's Saurabh Verma describes the Load Plan Generator JEE back-end utility in a fair bit of detail, but in summary you can think of the load plan generation process as going like this:

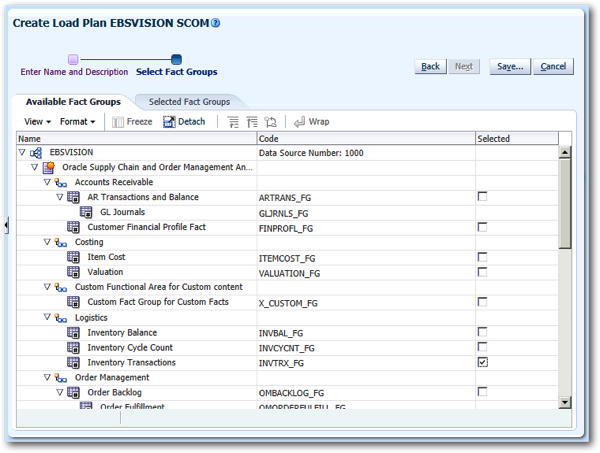

1. The ODI developer decides to create a new load plan, for loading data about inventories from EBS, for example. As shown in the screenshots before, domain values for this area within EBS will need to have been loaded prior to this, but assuming this is in place, the developer first selects one or more fact table groups, with each fact group a kind of "subject area" containing one or more data warehouse fact tables.

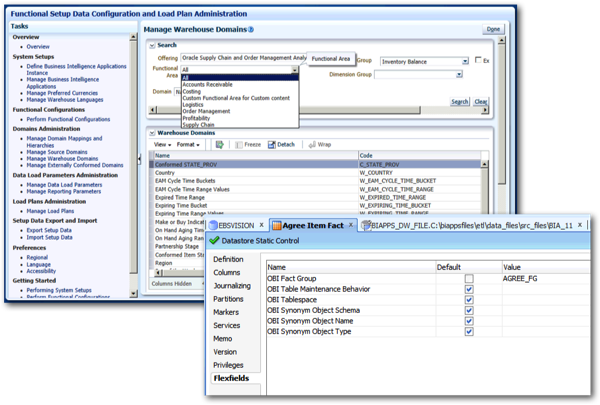

2. In the background, ODI doesn't have the concept of fact groups, but it instead uses flex field metadata for each fact table to specify which fact group each belongs to. You can see what's included in each fact group by looking at the warehouse domains view in Configuration Manager, and you can see the fact table flex fields in ODI Studio, when you view details of the table (or "model") in the Designer navigator.

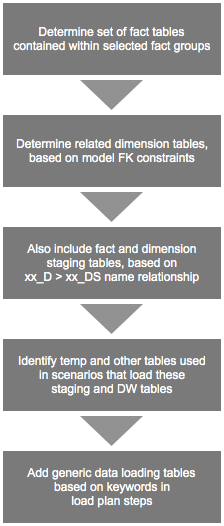

3. So when the BI Apps ODI developer tells Configuration Manager to create a new load plan, the steps it goes through and the metadata it consults look like this:

4. Giving you, in the end, a load plan with a standard set of phases, and optimally-selected and orchestrated load plan steps to load data into the required fact groups.

5. Then, once the load plan runs, you've got the same in-built restartability capabilities that you get in all other load plans, together with the concept of load plan instances, exceptions and so forth, so again as an ODI developer all of this is fairly obvious and fairly transparent to debug.
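The generation and restart steps above can be sketched in a few lines of Python. This is a toy model under stated assumptions, not the Load Plan Generator's actual algorithm: the table names, fact group names and the two phase names are invented for illustration, the `fact_group` attribute stands in for the flex field value held against each fact table, and the restart logic is a simplification of ODI's load plan restart behaviour.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FactTable:
    name: str
    fact_group: str         # stand-in for the flex field value on the ODI model
    dimensions: tuple = ()  # dimension tables this fact depends on


def generate_load_plan(fact_tables, selected_groups):
    """Build an ordered list of (phase, table) steps for the chosen fact groups."""
    chosen = [f for f in fact_tables if f.fact_group in selected_groups]
    dims = []
    for fact in chosen:
        for dim in fact.dimensions:
            if dim not in dims:  # a shared dimension is only loaded once
                dims.append(dim)
    plan = [("load_dimensions", d) for d in dims]
    plan += [("load_facts", f.name) for f in chosen]
    return plan


def run_plan(plan, execute, completed=None):
    """Run steps in order, skipping completed ones; stop at the first failure.

    Returns (completed_steps, failed_step_or_None), so a later call can
    resume from the failed step by passing completed_steps back in.
    """
    done = list(completed or [])
    for step in plan:
        if step in done:
            continue
        try:
            execute(step)
        except Exception:
            return done, step
        done.append(step)
    return done, None


# Hypothetical catalogue: names are made up, not real BI Apps tables.
CATALOG = [
    FactTable("INV_BALANCE_FACT", "INVENTORY_FG", ("PRODUCT_DIM", "WAREHOUSE_DIM")),
    FactTable("INV_TXN_FACT", "INVENTORY_FG", ("PRODUCT_DIM", "DATE_DIM")),
    FactTable("SALES_ORDER_FACT", "SALES_FG", ("PRODUCT_DIM", "CUSTOMER_DIM")),
]

plan = generate_load_plan(CATALOG, {"INVENTORY_FG"})
for phase, table in plan:
    print(phase, table)
```

Calling `run_plan(plan, execute)` with a function that raises on some step returns the steps completed so far plus the failed step; passing that completed list back in on the next call resumes from the point of failure rather than starting the whole load again.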

In the background, WebLogic Server hosts the ODI agent within an ODI-specific managed server and within the overall BI Apps WebLogic domain, with ODI's security linked to WebLogic so that the same developer and operator IDs work across the whole system, and with a set of additional Fusion Middleware Security application roles for the various levels of administrative access.

So, you could say that BI Apps 11.1.1.7.1 is a "developer's dream" for ODI professionals, as it brings the productivity and source system compatibility benefits of the BI Apps to the familiar, more efficient world of ODI. Everything is connected, and you can see where everything is stored in the various underlying repository tables. But, and this is probably the most obvious next thought from experienced BI Apps developers, how do you go about customising these ETL routines, adding new data sources, and upgrading the system over time? And where does the new integration with GoldenGate come in, giving us the ability to break out of restrictive load windows and potentially move BI Apps into the cloud? Watch this space for more details.