Going Beyond the Summary Advisor with TimesTen for Exalytics

I’m over in Seattle at the moment for ODTUG KScope’14, and one of the topics I’m presenting on this week is the use of TimesTen with OBIEE; what I’m going to talk about is taking TimesTen beyond its current use as an in-memory cache for aggregates created by the Summary Advisor, and instead using it to store additional custom aggregates, “hot” data from the source data warehouse, and other custom structures created using SQL*Developer and potentially loaded using Oracle Data Integrator. The point of the session is to show one of the main benefits of TimesTen even in the world of the new In-Memory Option for Oracle Database - it’s a flexible but Oracle-compatible database that’s typically under the control of the BI team and open to much more customisation than most customers realise.

To illustrate the point, I’m going to run through an example using the Oracle Database Sales History (SH) schema as the source in this article, with the example going through five stages of development:

- First, I’ll load TimesTen for Exalytics in the usual way, by having the Summary Advisor run some recommendations and then generate aggregates to load into TimesTen.

- Then, I’ll add some of my own aggregates into TimesTen, by using the Aggregate Persistence Wizard, so that we’ve now got a base set of aggregates from me (ones that I know we’ll need) plus whatever the Summary Advisor recommends based on historic query patterns.

- Next, I’ll use TimesTen to hold what we refer to as “hot data” - the actual transactional data from the source database in this case from the last six months. So now, TimesTen will contain both the full transaction-level data that users are most likely to query at detail-level, plus aggregations of the full dataset over time, giving us even more chance that TimesTen will be able to fulfil a user’s query.

- Then I’ll supplement this data in TimesTen with some additional reference data, that I’ll bring in from file using a tool like Oracle Data Integrator or the Oracle Database external table feature, modifying the existing TimesTen table structure using SQL*Developer to hold these additional dimension attributes.

- Finally, I’ll set up incremental refresh of all of this, in order to avoid the full table-drop-and-reload approach that the Summary Advisor and Aggregate Persistence Wizard use, and to make data refreshing more efficient.

Let’s start with steps 1 and 2, creating the aggregate layer for the underlying Sales History Oracle data source.

1. Creating Initial Aggregates using the Summary Advisor

We can use OBIEE’s Summary Advisor, and the underlying Aggregate Persistence mechanism that the Summary Advisor uses, to build the in-memory aggregates for our underlying datasource. Starting with the simplest part first, we’ll use the Summary Advisor to create a set of aggregates based off of recommendations from historic query data.

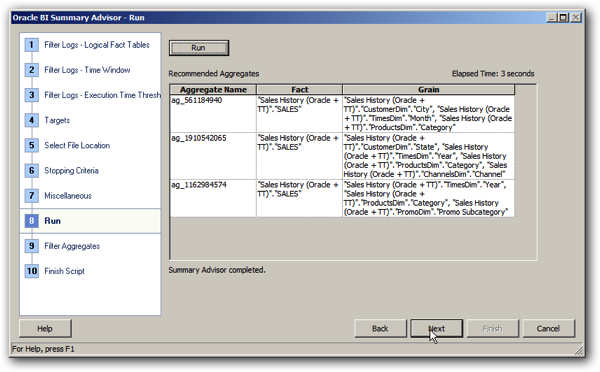

Running the Summary Advisor in my case brings up a recommendation to create three aggregates:

Running the script that the Summary Advisor generates brings up one of the issues that you’re likely to hit when using this tool, though: your RPD has to be absolutely “rock solid” in terms of correctness, otherwise your aggregate build will fail. In this example, the Summary Advisor aggregate persistence script starts off running OK, but then errors at the end when one of the aggregate tables fails to build:

c:\Middleware\Oracle_BI1\bifoundation\server\bin>nqcmd -d coreapplication_OH799

36239 -u weblogic -p welcome1 -s c:\ttscripts\summ_adv_SH_full_blog.sql

-------------------------------------------------------------------------------

Oracle BI ODBC Client

Copyright (c) 1997-2013 Oracle Corporation, All rights reserved

-------------------------------------------------------------------------------

create aggregates

"ag_561184940"

for "Sales History (Oracle + TT)"."SALES"("AMOUNT_SOLD","QUANTITY_SOLD")

at levels ("Sales History (Oracle + TT)"."CustomerDim"."City", "Sales History (Oracle + TT)"."TimesDim"."Month", "Sales History (Oracle + TT)"."ProductsDim"."Category")

using connection pool "tt_aggr_store"."Connection Pool"

in "tt_aggr_store".."EXALYTICS",

…

"ag_1162984574"

for "Sales History (Oracle + TT)"."SALES"("AMOUNT_SOLD","QUANTITY_SOLD")

at levels ("Sales History (Oracle + TT)"."TimesDim"."Year", "Sales History (Oracle + TT)"."ProductsDim"."Category", "Sales History (Oracle + TT)"."PromoDim"."Promo Subcategory")

using connection pool "tt_aggr_store"."Connection Pool"

in "tt_aggr_store".."EXALYTICS"

[10058][State: S1000] [NQODBC] [SQL_STATE: S1000] [nQSError: 10058] A general error has occurred.

Statement preparation failed

Processed: 1 queries

Encountered 1 errors

Looking at the list of tables left in TimesTen at that point, I can see that one of the dimensions failed to build, which then cascaded down and caused the dependent fact table build to fail:

C:\Users\Administrator>ttisql

Copyright (c) 1996, 2013, Oracle and/or its affiliates. All rights reserved.

Type ? or "help" for help, type "exit" to quit ttIsql.

Command> connect "DSN=tt_exalytics_3;uid=exalytics;pwd=welcome1";

Connection successful: DSN=tt_exalytics_3;UID=exalytics;DataStore=C:\TimesTen\tt_data_3;DatabaseCharacterSet=AL32UTF8;ConnectionCharacterSet=US7ASCII;DRIVER=C:\TimesTen\TT1122~2\bin\ttdv1122.dll;LogDir=C:\TimesTen\tt_data_3;PermSize=4000;TempSize=2000;LockWait=60010.0;SQLQueryTimeout=60000;TypeMode=0;QueryThreshold=60000;PLSCOPE_SETTINGS=IDENTIFIERS:NONE;RangeIndexType=1;

(Default setting AutoCommit=1)

Command> tables;

EXALYTICS.SA_CATEGOR0000AFE8

EXALYTICS.SA_CHANNEL0000B8F9

EXALYTICS.SA_CITY0000AC6A

EXALYTICS.SA_MONTH0000AC81

EXALYTICS.SA_PROMO_S0000B8E8

EXALYTICS.TEST

6 tables found.

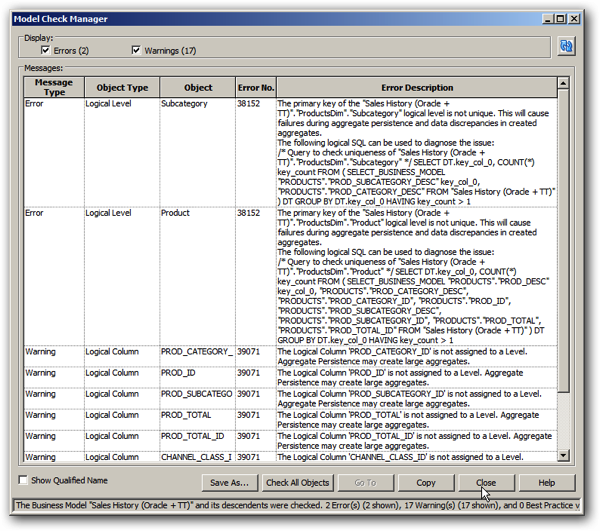

Summary Advisor aggregates failing to build is something that regular Exalytics developers will be used to, at least from when they first use the tool. The trick is to run the Model Checker, within the BI Administration Tool, before you try to generate any aggregates. Whilst your RPD might pass the regular consistency check and be valid as a repository that can go online and be used for reporting, it may not be consistent or logically correct from an aggregation standpoint. Looking at the NQQuery.log file, I can see that the first aggregate failed to build because aggregate persistence rejected the shared product dimension that all of the aggregate fact tables used.

Running the model checker on my business model, I can see there are two errors listed for the product dimension, and a bunch of warnings where I’ve not added dimension attribute columns to their relevant dimension level.

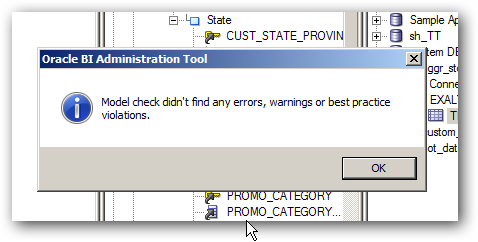

Running the logical SQL given in the two error messages at the start, I can see that there’s an error in the data that I’m using for my Products dimension data source, in that one or more of the dimension levels contains keys that are duplicates - in this case because there are two “Accessories” product subcategories with different IDs. I go into SQL*Developer and correct this issue, and also add the logical columns listed as “warnings” into their respective logical dimension levels, so that running the model checker again gives me this message instead:

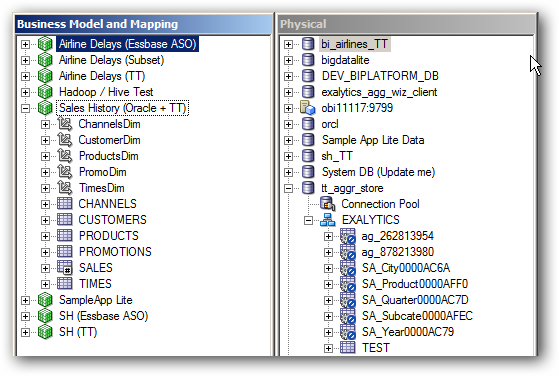

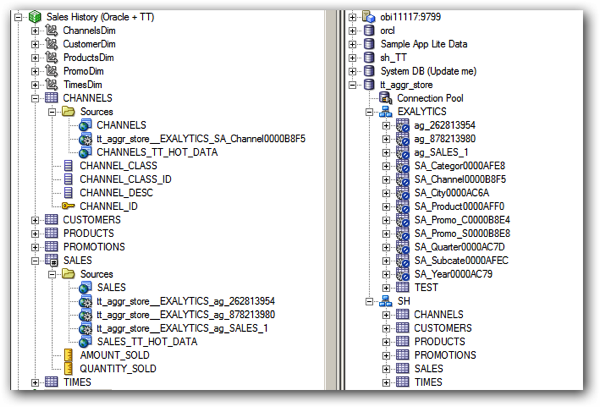

I then pick two of the aggregate recommendations and run the script for them, so in the end I’m left with the aggregate tables below in my RPD.

Thereafter, I can run the Summary Advisor again to generate some more recommendations, or I can add my own specific aggregates into the TimesTen database using the Aggregate Persistence Wizard, for example to add an aggregate I know users are going to want to use.
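The duplicate-key problem that the Model Checker flagged earlier in this section can also be spotted ahead of time with a quick check on the dimension data. The Python sketch below is only an illustration under stated assumptions: the rows are made-up sample values rather than the real SH product data, and `find_duplicate_level_names` is a hypothetical helper, not part of OBIEE or TimesTen. It groups level members by name and reports any name that maps to more than one ID.

```python
from collections import defaultdict


def find_duplicate_level_names(rows):
    """Return {name: sorted ids} for names that appear with more than one ID.

    rows is an iterable of (level_id, level_name) pairs, for example the
    distinct subcategory IDs and names taken from a products dimension table.
    """
    ids_by_name = defaultdict(set)
    for level_id, level_name in rows:
        ids_by_name[level_name].add(level_id)
    return {
        name: sorted(ids)
        for name, ids in ids_by_name.items()
        if len(ids) > 1
    }


# Made-up sample rows (an assumption, not the real SH data): "Accessories"
# appears twice with different IDs, which is the pattern described above.
sample_subcategories = [
    (101, "Accessories"),
    (102, "Cameras"),
    (103, "Accessories"),
    (104, "Monitors"),
]

duplicates = find_duplicate_level_names(sample_subcategories)
print(duplicates)
```

Any name returned by the helper is a candidate for the kind of correction made in SQL*Developer above, before the aggregate build is attempted again.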

2. Adding Custom Aggregates using the Aggregate Persistence Wizard

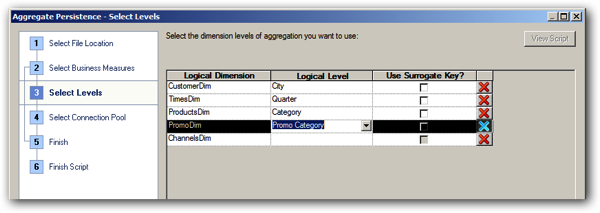

Generating aggregates using the Aggregate Persistence Wizard uses the same underlying mechanism to create and populate the aggregate tables, but in this instance I select the specific measures, dimensions and hierarchy levels I want to aggregate by. In this instance, I use the Aggregate Persistence Wizard to create an additional aggregate using the Promotions dimension, as I know some new reports coming along will make use of this pre-aggregation.

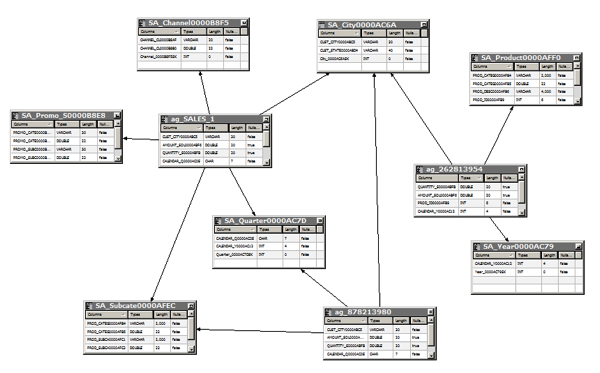

So where I am at the end of all this is with a TimesTen aggregate data mart containing three aggregate fact tables - two recommended by the Summary Advisor, one I added myself - along with supporting dimension tables.

And this is great for handling dashboard queries that request summarised data, through a graph or pivot table analysis. But what about when users want to drill-down to the detail, or run reports against recent transactional activity? That’s where the second TimesTen use-case, “hot data”, comes in.

3. Populating TimesTen with Transactional “Hot Data”

So far we’ve looked at populating our TimesTen database with aggregate data, but what about making the most recent set of transactions available to users also in TimesTen, so that analyses running against recent activity even at the detail level run fast too? To do this we can use the ttImportFromOracle utility to replicate into TimesTen the Sales History schema, and then edit the data loading script it generates to only load the last six months of data.

This utility only works for Oracle sources, and ships with recent versions of TimesTen in the /support directory. Using it I can have the utility scan the data in my source database in order to recommend the most space-efficient TimesTen datatypes, and I can also use it to recommend compression settings for use with the TimesTen for Exalytics Columnar Compression feature.

As ttImportFromOracle requires you to have a TimesTen schema with the same name as the one you’re importing from in Oracle, I create a new schema in my TimesTen for Exalytics database, ready for importing from the Oracle source:

create user sh identified by welcome1;

grant create session to sh;

grant create table to sh;

grant select on SYS.OBJ$ to sh;

grant admin to sh;

Then I can use the utility to create the DDL and data loading scripts that I’ll then use to import the hot data from Oracle.

c:\TimesTen\tt1122_64_3\support>ttImportFromOracle.exe -oraconn sh/password@orcl -tables CUSTOMERS PRODUCTS SALES CHANNELS PROMOTIONS TIMES -typeMap 2,1 -compression 1

Beginning processing

Resolving any tablename wildcards

Eliminating any duplicate tables

Getting metadata from source

Generating database user list

Assigning TimesTen datatypes

Analyzing source tables (this may take some time)

Analyzing table 'SH.CUSTOMERS' ...

Analyzing table 'SH.PRODUCTS' ...

Analyzing table 'SH.SALES' ...

Analyzing table 'SH.CHANNELS' ...

Analyzing table 'SH.PROMOTIONS' ...

Analyzing table 'SH.TIMES' ...

Optimizing TimesTen datatypes

Estimating compression ratios

Generating output files

Finished processing

This set of scripts creates the tables and indexes to hold the replicated data from Oracle, and I can edit the “LoadData.sql” script that comes with the DDL scripts to load just the last six months of data - to do this I split the “ttLoadFromOracle” TimesTen procedure calls in this script into ones for the dimensions and one for the fact table load, which after amending it to load just six months data looks like this:

timing 1;

call ttLoadFromOracle('SH', 'SALES', 'SELECT S.PROD_ID, S.CUST_ID, S.TIME_ID, S.CHANNEL_ID, S.PROMO_ID, S.QUANTITY_SOLD, S.AMOUNT_SOLD

FROM SH.SALES S, SH.TIMES T

WHERE S.TIME_ID = T.TIME_ID

AND T.CALENDAR_MONTH_DESC > ''2001-06''');
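If the six-month window needs to move forward over time, the cutoff month in that call can be worked out rather than typed in by hand. The Python sketch below is one possible way to do it and rests on a few assumptions: `month_cutoff` and `render_fact_load` are hypothetical helper names, the latest month is passed in as a `YYYY-MM` string in the same format as `CALENDAR_MONTH_DESC`, and the output is only the text of the `ttLoadFromOracle` call shown above, to be pasted into the load script.

```python
def month_cutoff(latest_month, months_to_keep=6):
    """Return the 'YYYY-MM' value that sits just before the window to load.

    With latest_month='2001-12' and six months to keep, the window is
    2001-07 to 2001-12, so the cutoff used with '>' is '2001-06'.
    """
    year, month = (int(part) for part in latest_month.split("-"))
    total = year * 12 + (month - 1) - months_to_keep
    cutoff_year, cutoff_month_index = divmod(total, 12)
    return f"{cutoff_year:04d}-{cutoff_month_index + 1:02d}"


def render_fact_load(latest_month, months_to_keep=6):
    """Build the text of the fact-table load call for the chosen window."""
    cutoff = month_cutoff(latest_month, months_to_keep)
    query = (
        "SELECT S.PROD_ID, S.CUST_ID, S.TIME_ID, S.CHANNEL_ID, S.PROMO_ID, "
        "S.QUANTITY_SOLD, S.AMOUNT_SOLD "
        "FROM SH.SALES S, SH.TIMES T "
        "WHERE S.TIME_ID = T.TIME_ID "
        f"AND T.CALENDAR_MONTH_DESC > '{cutoff}'"
    )
    # Single quotes inside the query are doubled because the whole query is
    # passed to ttLoadFromOracle as one quoted string.
    escaped = query.replace("'", "''")
    return f"call ttLoadFromOracle('SH', 'SALES', '{escaped}');"


print(render_fact_load("2001-12"))
```

Because `YYYY-MM` strings sort in date order, the plain `>` comparison in the generated query behaves the same way as in the hand-edited version.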

Then I connect to TimesTen as the SH user, providing the password to the Oracle database as part of the connect string, and then run the scripts I’ve just generated:

Command> connect "DSN=tt_exalytics_3;uid=sh;password=welcome1;oraclepwd=password";

Command> run c:\ttimportscripts\CreateTables.sql

Command> run c:\ttimportscripts\LoadData_dims.sql

Command> run c:\ttimportscripts\LoadData_fact.sql

Command> run c:\ttimportscripts\CreateIndexes.sql

Command> run c:\ttimportscripts\UpdateStats.sql

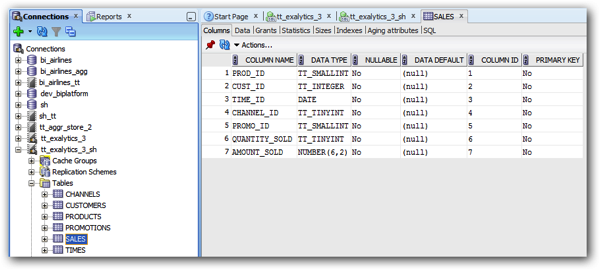

I can then go over to SQL*Developer and see the new TimesTen tables there, with the optimised datatypes provided by ttImportFromOracle:

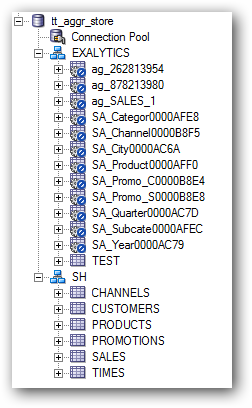

and also import these new tables into the RPD, adding an extra schema alongside the aggregate tables:

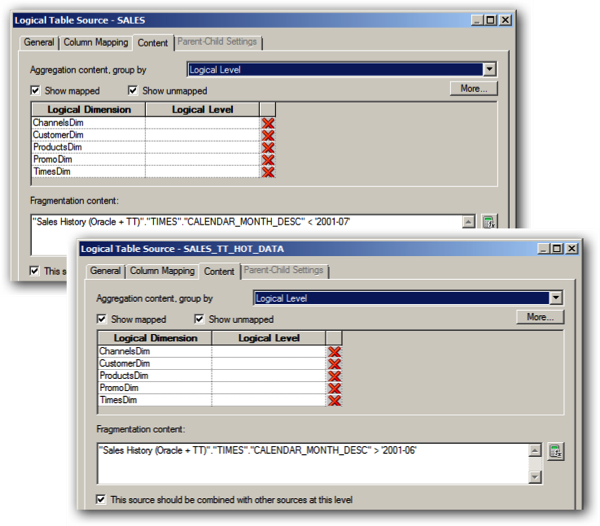

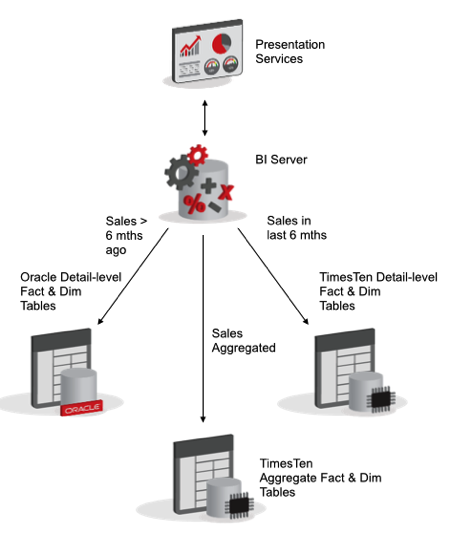

Then, the way to make use of this six months of transactional data in TimesTen is to set up “fragmentation” in the OBIEE RPD, so that the BI Server goes to the TimesTen data source when queries require data from the past six months, and the standard Oracle datasource if data further back from that is required; in addition, because of the aggregates we’ve also set up, it’ll go to the TimesTen aggregate tables if queries request aggregated data, so TimesTen performs the role of holding both “hot” data, and aggregate data.

The first step in setting this up is to map in the new TimesTen tables as additional logical table sources in the existing business model. In the screenshot below, you can see the new TimesTen logical table sources added to the existing Oracle and TimesTen aggregate logical table sources; when you do this, make sure you remove any additional logical table keys that might come across when you map in the new TimesTen tables.

Then, for just the fact table within the logical model, edit the Oracle detail-level and the TimesTen “hot data” detail-level table sources, and add fragmentation conditions to define what time period each source covers, like this:

Do this just for the fact table logical table source; then, when a query comes through to the BI Server, if it needs to refer to the TimesTen logical table source it’ll join-out to the relevant TimesTen hot data logical table sources to get the rest of the required data, and when it needs to go to the Oracle fact table logical table source, it’ll join-out to the Oracle LTSs, as shown in the diagram below.

Then, when you include the TimesTen aggregate tables in the architecture as well, you’ve got three potential sources of data for user reports, all handled seamlessly and automatically by the BI Server: the Oracle and TimesTen “hot” data sources for detail-level reporting, with one or the other (or both) used for queries at the transaction level, and the TimesTen aggregate tables when they would answer a query faster than rolling up the detail-level sources on the fly.
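To make the routing idea more concrete, here is a deliberately simplified Python model of the three sources described above. It is a mental model only and makes several assumptions: the BI Server’s real source selection is more involved than this, `candidate_sources` and its parameters are hypothetical names, and the cutoff of `2001-06` simply mirrors the six-month window used for the hot data load earlier.

```python
# Months after this value are held in the TimesTen "hot data" tables;
# this month and anything earlier is only in the Oracle source (assumption
# mirroring the load filter used earlier in the article).
HOT_DATA_CUTOFF = "2001-06"


def candidate_sources(first_month, last_month, needs_detail, aggregate_covers_query):
    """Return the logical table sources a query could be routed to.

    Months are 'YYYY-MM' strings, which sort correctly as plain text.
    """
    # Summarised queries go to an aggregate table when one covers them.
    if not needs_detail and aggregate_covers_query:
        return ["TimesTen aggregate"]

    # Otherwise the fragmentation conditions decide which detail-level
    # sources are needed for the requested time period.
    sources = []
    if first_month <= HOT_DATA_CUTOFF:
        sources.append("Oracle detail")
    if last_month > HOT_DATA_CUTOFF:
        sources.append("TimesTen hot data")
    return sources


print(candidate_sources("2001-09", "2001-12", True, False))
print(candidate_sources("2001-01", "2001-12", True, False))
print(candidate_sources("2001-01", "2001-12", False, True))
```

A query that spans the cutoff needs both detail-level fragments, which corresponds to the “or both” case mentioned above.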

4. Supplementing with Additional Reference Data

One of the advantages of using a mid-tier database like TimesTen, that’s under the control of the BI department, is that you can be a lot more “agile” in terms of bringing in new data sources, than is often the case when you’re reporting against a data warehouse that’s strictly governed and a shared corporate resource. TimesTen lends itself well to agile development too, in that you can work with it using tools like SQL*Developer and easily load data into it using ETL tools such as Oracle Data Integrator, or leverage the connectivity features in the Oracle Database and then use ttImportFromOracle.

Working with TimesTen database structures is more or less the same process as working with Oracle ones, but there are a couple of differences you need to be aware of when developing your TimesTen tables. Adding new columns to a table is possible, but these can end up stored “out of line” with the main column set and can cause performance issues when queried, or compatibility issues when loading using tools like ttImportFromOracle. If you’re using TimesTen to store “hot” data, make sure you’ve got corresponding tables for this reference data in the source Oracle database, and make sure you select the most appropriate TimesTen datatypes for your new data (for example, TT_TINYINT and TT_INTEGER) rather than just the default Oracle-like datatypes.
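For tables you create by hand, rather than through ttImportFromOracle, the datatype choice for whole-number columns comes down to the range of values the column has to hold. The Python sketch below illustrates that reasoning; `smallest_tt_integer_type` is a hypothetical helper, and the fallback to `NUMBER` for values outside the 64-bit range is an assumption about what you would want rather than a rule from the TimesTen documentation.

```python
# TimesTen native integer types and the value ranges they can hold.
# TT_TINYINT is unsigned; the other three are signed.
TT_INTEGER_TYPES = [
    ("TT_TINYINT", 0, 255),
    ("TT_SMALLINT", -32768, 32767),
    ("TT_INTEGER", -2**31, 2**31 - 1),
    ("TT_BIGINT", -2**63, 2**63 - 1),
]


def smallest_tt_integer_type(min_value, max_value):
    """Pick the narrowest native integer type that fits the observed range."""
    for type_name, low, high in TT_INTEGER_TYPES:
        if low <= min_value and max_value <= high:
            return type_name
    # Outside the 64-bit range: fall back to the Oracle-style NUMBER type.
    return "NUMBER"


print(smallest_tt_integer_type(0, 200))
print(smallest_tt_integer_type(-1, 200))
print(smallest_tt_integer_type(0, 100000))
```

The minimum and maximum would typically come from a simple `MIN`/`MAX` query against the source column, with some headroom added for future values.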

Most importantly, for all of your TimesTen tables, make sure you run the Index Advisor after you start to run user reports in order to ensure you’re using the right indexes for your particular query patterns, and make sure you gather stats on your TimesTen tables as well as the Oracle ones, so that the TimesTen query optimiser can generate the most efficient query plans.

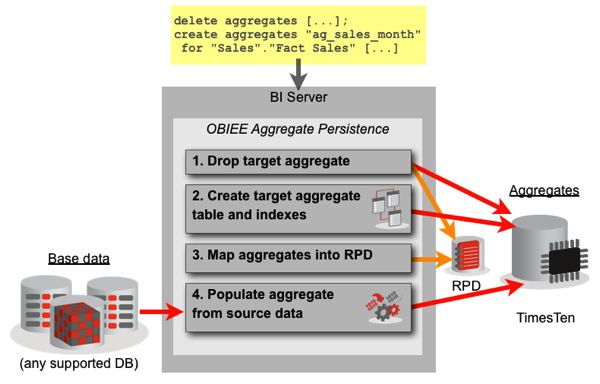

5. Setting up Incremental Refresh

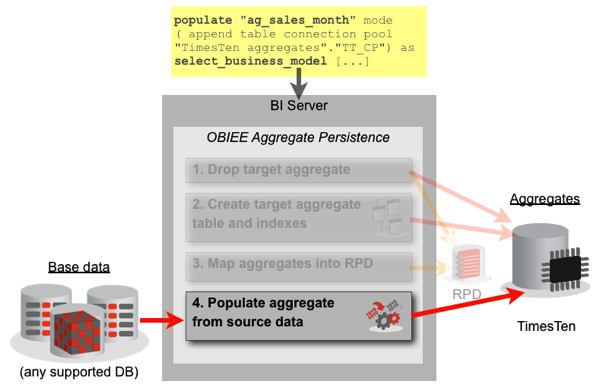

The last thing we want to set up for our TimesTen analytic data mart is some way to incrementally refresh the aggregates we built using the Summary Advisor and Aggregate Persistence Wizard. The way these tools work is that they drop, and then recreate the aggregate tables each time you load them, and add and remove them from the RPD at the start and end of the data load, as shown in the diagram below:

What you can do, though, as outlined in this earlier blog post by Robin Moffatt, is take the POPULATE BI Server command that you’ll find in the nqquery.log file after an aggregate build, and use it to just refresh the aggregates in-place, without dropping the tables beforehand or removing them from the RPD, as shown in the diagram below.

Looking through the nqquery.log file I can see various POPULATE commands for the TimesTen dimension tables, like this:

populate "SA_Promo_C0000B8E4" mode ( append table connection pool "tt_aggr_store"."Connection Pool") as select_business_model "Sales History (Oracle + TT)"."PROMOTIONS"."PROMO_CATEGORY" as "PROMO_CATE0000B8C6","Sales History (Oracle + TT)"."PROMOTIONS"."PROMO_CATEGORY_ID" as "PROMO_CATE0000B8C7", RCOUNT (1) as "Promo_C_0000B8E4SK" from "Sales History (Oracle + TT)";

If I then couple that with the INACTIVE_SCHEMAS variable being set to the TimesTen physical data source, add a command to truncate the dimension table before the load, and then alter the fact table POPULATE command’s SELECT statement to only load in just this past month’s data, deleting that data down from the target fact table beforehand, I’ve got a full incremental refresh I can run from a cron job or other scheduler. The script excerpt below shows such an example, with just a few of the dimensions being reloaded.

For the “hot data” tables it’s a similar case of taking the existing LoadData.sql and either modifying it to load in just the new transactions, or reloading all six months if that turns out to be easier.

execute physical connection pool "tt_aggr_store"."Connection Pool" truncate table SA_Categor0000AFE8;

SET VARIABLE INACTIVE_SCHEMAS='"tt_aggr_store".."EXALYTICS_AGG_WIZ"':

populate "SA_Categor0000AFE8" mode ( append table connection pool "tt_aggr_store"."Connection Pool") as select_business_model "SH (Oracle)"."PRODUCTS"."PROD_CATEGORY_DESC" as "PROD_CATEG0000AFB4", RCOUNT (1) as "Categor_0000AFE8SK" from "SH (Oracle)";

execute physical connection pool "tt_aggr_store"."Connection Pool" truncate table SA_Month0000AC81;

SET VARIABLE INACTIVE_SCHEMAS='"tt_aggr_store".."EXALYTICS"':

populate "SA_Month0000AC81" mode ( append table connection pool "tt_aggr_store"."Connection Pool") as select_business_model "SH (Oracle)"."TIMES"."CALENDAR_MONTH_DESC" as "CALENDAR_M0000AC0A","SH (Oracle)"."TIMES"."CALENDAR_QUARTER_DESC" as "CALENDAR_Q0000AC0E","SH (Oracle)"."TIMES"."CALENDAR_YEAR" as "CALENDAR_Y0000AC12", RCOUNT (1) as "Month_0000AC81SK" from "SH (Oracle)";

execute physical connection pool "tt_aggr_store"."Connection Pool" truncate table SA_City0000AC6A;

SET VARIABLE INACTIVE_SCHEMAS='"tt_aggr_store".."EXALYTICS"':

populate "SA_City0000AC6A" mode ( append table connection pool "tt_aggr_store"."Connection Pool") as select_business_model "SH (Oracle)"."CUSTOMERS"."CUST_CITY" as "CUST_CITY0000ABC5","SH (Oracle)"."CUSTOMERS"."CUST_STATE_PROVINCE" as "CUST_STATE0000ABD4", RCOUNT (1) as "City_0000AC6ASK" from "SH (Oracle)";

execute physical connection pool "tt_aggr_store"."Connection Pool" delete from ag_SALES where CALENDAR_M0000AC0A = '2001-12';

SET VARIABLE INACTIVE_SCHEMAS='"tt_aggr_store".."EXALYTICS"':

populate "ag_SALES" mode ( append table connection pool "tt_aggr_store"."Connection Pool") as

select_business_model "SH (Oracle)"."CUSTOMERS"."CUST_CITY" as "CUST_CITY0000ABC5",

"SH (Oracle)"."SALES"."AMOUNT_SOLD" as "AMOUNT_SOL0000ABF6",

"SH (Oracle)"."SALES"."QUANTITY_SOLD" as "QUANTITY_S0000ABFB",

"SH (Oracle)"."TIMES"."CALENDAR_MONTH_DESC" as "CALENDAR_M0000AC0A",

"SH (Oracle)"."PRODUCTS"."PROD_CATEGORY_DESC" as "PROD_CATEG0000AFB4"

from "SH (Oracle)"

where "SH (Oracle)"."TIMES"."CALENDAR_MONTH_DESC" = '2001-12';
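Since the month value appears twice in the fact table part of that script (once in the delete and once in the populate filter), it is easy for the two to drift apart when editing by hand. The Python sketch below is one way to generate that part of the script for a given month; it is an illustration under assumptions, with `render_fact_refresh` being a hypothetical helper and the template copied from the fact table statements above, so any change to the aggregate table or column names would need to be reflected in it.

```python
import re

# Template copied from the fact-table refresh statements shown above; only
# the month literal is parameterised.
FACT_REFRESH_TEMPLATE = '''execute physical connection pool "tt_aggr_store"."Connection Pool" delete from ag_SALES where CALENDAR_M0000AC0A = '{month}';

SET VARIABLE INACTIVE_SCHEMAS='"tt_aggr_store".."EXALYTICS"':

populate "ag_SALES" mode ( append table connection pool "tt_aggr_store"."Connection Pool") as
select_business_model "SH (Oracle)"."CUSTOMERS"."CUST_CITY" as "CUST_CITY0000ABC5",
"SH (Oracle)"."SALES"."AMOUNT_SOLD" as "AMOUNT_SOL0000ABF6",
"SH (Oracle)"."SALES"."QUANTITY_SOLD" as "QUANTITY_S0000ABFB",
"SH (Oracle)"."TIMES"."CALENDAR_MONTH_DESC" as "CALENDAR_M0000AC0A",
"SH (Oracle)"."PRODUCTS"."PROD_CATEGORY_DESC" as "PROD_CATEG0000AFB4"
from "SH (Oracle)"
where "SH (Oracle)"."TIMES"."CALENDAR_MONTH_DESC" = '{month}';
'''


def render_fact_refresh(month):
    """Return the delete-then-populate script text for one 'YYYY-MM' month."""
    # Reject anything that is not a plain YYYY-MM value, so that the delete
    # and the populate filter always receive the same well-formed literal.
    if not re.fullmatch(r"\d{4}-(0[1-9]|1[0-2])", month):
        raise ValueError(f"expected a month like 2001-12, got {month!r}")
    return FACT_REFRESH_TEMPLATE.format(month=month)


script = render_fact_refresh("2001-12")
print(script)
```

The generated text could be written to a file and passed to nqcmd with the `-s` option in the same way as the Summary Advisor script earlier, from whatever scheduler runs the refresh.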

Conclusion

So what we’ve got here then is a much-expanded use of TimesTen in the context of Exalytics and OBIEE; we’ve not only used it as an in-memory cache for Summary Advisor aggregates, but we’ve used the same underlying mechanism to create other aggregates that we know will be useful for future queries.

We’ve also made use of the 1-2TB of RAM on the Exalytics server to cache the last six months of detail-level transactional data, making it even more likely that TimesTen will be able to answer all of the users’ queries.

Once you’ve got your data in TimesTen, you’ve got access to the same full set of analysis functions that are available when reporting against regular Oracle databases, with TimesTen for Exalytics giving you more native aggregation and analysis functions compared to standard TimesTen, and the BI Server “functionally compensating” for anything not natively available in TimesTen by performing the calculation itself, using the raw data provided by TimesTen.

Finally, we’ve talked about how we can load in additional datasets into TimesTen via ODI or ttImportFromOracle, potentially using the latter in conjunction with external tables to bring in file data, and then looked at what’s involved in trickle-feeding the TimesTen cache rather than dropping and reloading it each time.

If you’re at KScope’14 in Seattle this week, I’m presenting on this topic on the Wednesday; if not, and you’re potentially interested in us helping you get more out of your TimesTen for Exalytics install, just drop me a line at mark.rittman@rittmanmead.com.