Oracle Data Integrator to load Oracle BICS and Oracle Storage Cloud Service

Oracle is focusing its sales on the cloud this year, and in the BI world we have seen the introduction of Oracle Business Intelligence Cloud Service (BICS), which we already covered on the blog. Another great product, for storing files and unstructured data, is Oracle Storage Cloud Service, which can also be used as a staging area before loading a BICS schema. To provision our BI system in the cloud or to back up our files, it's important to find a reliable and maintainable way to move on-premise data to the cloud. And when we speak about a reliable and maintainable way to move data, I can't help but think about Oracle Data Integrator. Luckily, Ayush Ganeriwal wrote two excellent blog posts explaining how to use ODI to load these two cloud services! He even gave away the Knowledge Modules, ODI Open Tools, Jar files and other things he developed to make it work.

Load data to Oracle Storage Cloud Service with a package.

Oracle Storage Cloud Service is used to store files and unstructured data in the cloud, very much like Amazon S3. A company that has moved to the cloud needs some space to store the files that will be used by its other cloud services, and Oracle Storage Cloud Service is the place to store them. If you want to use it as a cloud backup of your local filesystem, you might even choose Oracle Storage Cloud Archive Service, which has cheaper storage but costs more when retrieving or editing data, similarly to Amazon Glacier.

Oracle Storage Cloud Service offers a RESTful web service API to load data, as well as a Java Library that directly wraps this API and supports client-side encryption. When it comes to loading data, what could be better than using the tool we already use for everything else? In this blog post, Ayush details how he created an ODI Open Tool that can load data to Oracle Storage Cloud Service using the Java Library.
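To give an idea of what the RESTful API involves underneath the Java Library, here is a minimal Python sketch that only builds the two HTTP requests (authenticate, then upload) without sending them. It assumes the service follows the OpenStack Swift conventions (an `X-Storage-User`/`X-Storage-Pass` authentication call returning a token, then a `PUT` on container/object with `X-Auth-Token`). The function names, host name, container and credentials are all hypothetical, and this is not how Ayush's Open Tool is implemented.

```python
import urllib.request
from urllib.parse import quote


def build_auth_request(auth_url, user, password):
    """Build (but do not send) the authentication request.

    Assumption: Swift-style auth, where the response would carry the
    token and storage URL in its headers.
    """
    return urllib.request.Request(
        auth_url,
        headers={"X-Storage-User": user, "X-Storage-Pass": password},
        method="GET",
    )


def build_upload_request(storage_url, token, container, object_name, payload):
    """Build (but do not send) a PUT request that would upload one object."""
    url = "{}/{}/{}".format(
        storage_url.rstrip("/"), quote(container), quote(object_name)
    )
    return urllib.request.Request(
        url,
        data=payload,  # raw bytes of the file to upload
        headers={"X-Auth-Token": token},
        method="PUT",
    )


# Hypothetical values, for illustration only.
upload = build_upload_request(
    "https://storage.example.com/v1/Storage-mydomain",
    "token-from-auth-call",
    "backups",
    "locations.csv",
    b"id,name\n1,London\n",
)
print(upload.get_method(), upload.full_url)
```

Passing either request to `urllib.request.urlopen` would actually perform the call. The sketch stops short of that so it can be read and run without an account.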

We first need to download the Java Library and place it in our ODI Studio or Agent classpaths. Below are the default locations for these classpaths, but we can also specify additional classpaths if we want. Don't forget to close ODI Studio and shut down the agent(s) before adding the files.

- ODI Studio on Unix: ~/.odi/oracledi/userlib
- ODI Studio on Windows: %APPDATA%\Roaming\odi\oracledi\userlib
- 12c Standalone Agent: <ODI_AGENT_HOME>/odi/agent/lib
- 11g Standalone Agent: <ODI_AGENT_HOME>/oracledi/agent/drivers/

For a JEE agent, we need to create a server template as described in the doc: https://docs.oracle.com/middleware/1212/odi/ODIDG/setup_topology.htm#CHDHGIGI

In the same folder, we can also drop the Jar file for the Open Tool that Ayush created and posted on java.net (last link in the folder at the moment of writing). Remember that all the drivers, libraries and Open Tools have to be present in every ODI Studio or agent install.
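Because the same Jar files have to land in every Studio and agent install, the copy is worth scripting. The following is a minimal Python sketch, assuming all the jars sit in one local folder. The function name `deploy_jars` and the paths in the usage comment are hypothetical, and Studio and the agents should still be stopped before running anything like it.

```python
import shutil
from pathlib import Path


def deploy_jars(source_dir, target_dirs):
    """Copy every .jar found in source_dir into each target directory.

    Returns the list of destination paths that were written.
    """
    copied = []
    jars = sorted(Path(source_dir).glob("*.jar"))
    for target in target_dirs:
        target_path = Path(target)
        # Create the classpath folder if this install does not have it yet.
        target_path.mkdir(parents=True, exist_ok=True)
        for jar in jars:
            destination = target_path / jar.name
            shutil.copy2(jar, destination)  # copy2 keeps file timestamps
            copied.append(destination)
    return copied


# Hypothetical usage: one Studio userlib folder and one agent lib folder.
# deploy_jars("downloads/odi-cloud-jars",
#             [Path.home() / ".odi/oracledi/userlib",
#              "/u01/odi_agent/odi/agent/lib"])
```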

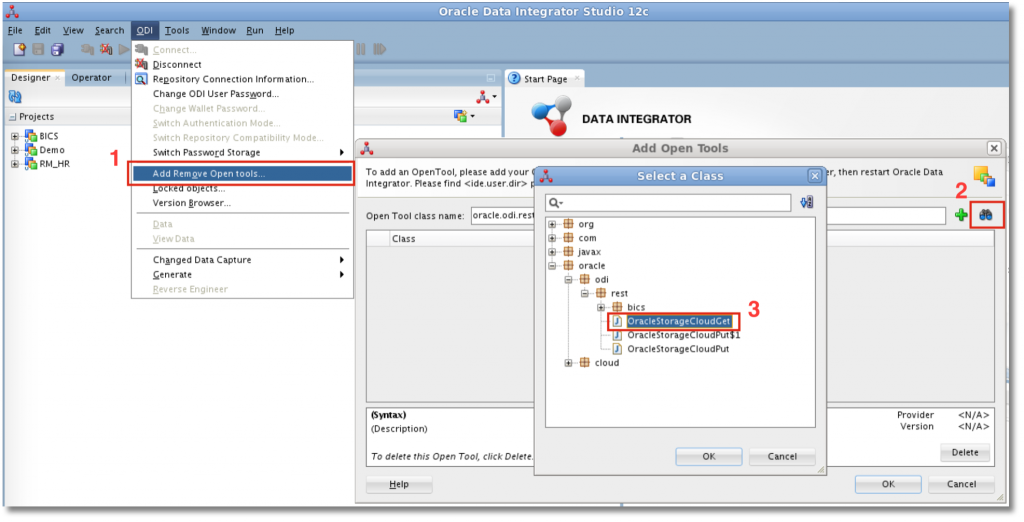

We can now reopen ODI Studio, as we need to register the new Open Tool in the master repository so everyone can use it. In the ODI menu, click on Add/Remove Open Tools and then on the goggles icon to search for the Open Tools. In our case, the class is under the package oracle.odi.rest.

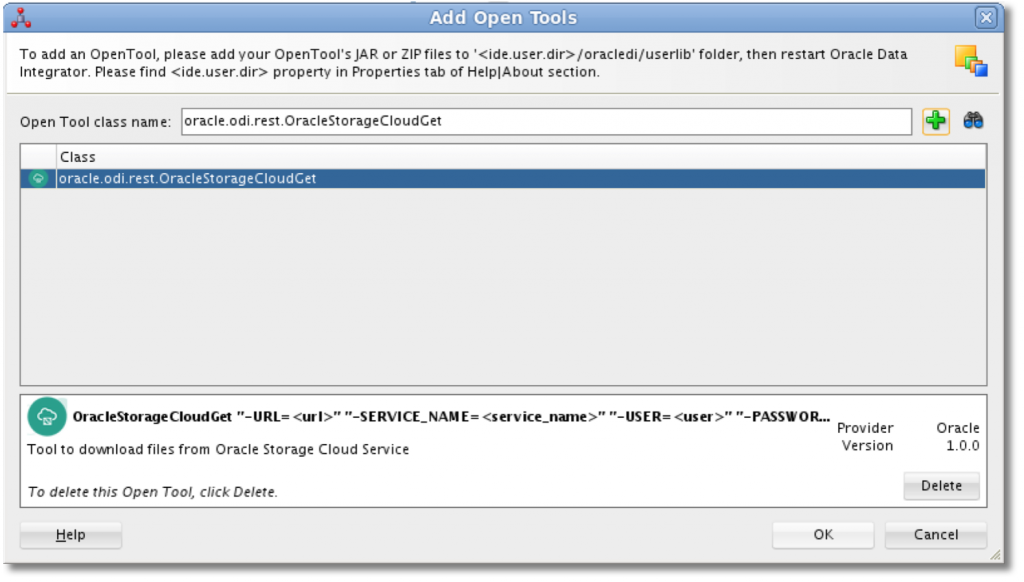

Select the OracleStorageCloudGet tool and click the Plus icon to add it. There we can see the description of the tool, the command, the parameters and the icon used. Let's add the OracleStorageCloudPut as well and click OK.

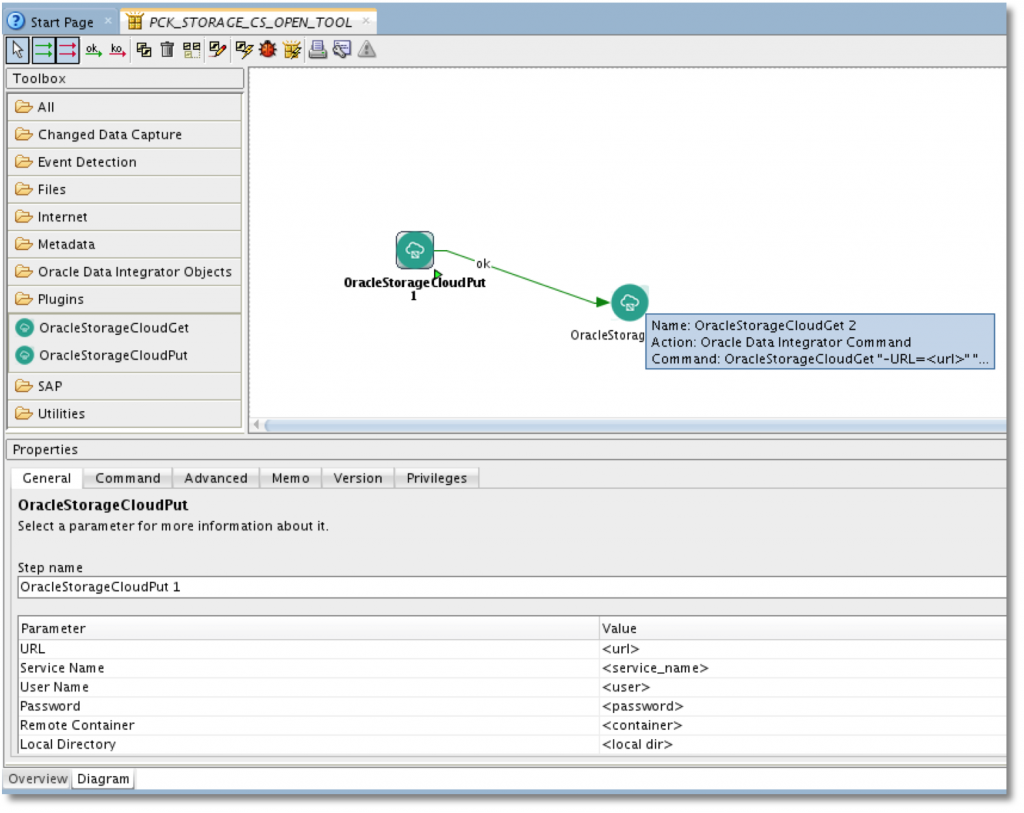

That's it! We can now see two new ODI Open Tools under the Plugins category in the Package Toolbox. We can add them to a package and fill in all the parameters, or copy/paste the command to use it in an ODI Procedure.

Load data to Oracle Business Intelligence Cloud Service with a mapping.

BICS offers a Data Loader, which is actually an Apex application taking Excel, CSV, text or zip files as input. But this is more of a manual process, used when working with sample data or really small datasets. If we plan to use BICS in a production environment, we need something more resilient that can be scheduled and that handles errors. We could use Data Sync, but maybe you don't want to introduce a new tool. Luckily, BICS also provides a RESTful web service API we can use to load data. Again, ODI is the perfect tool to create our own reusable components to integrate with new sources or targets. And once again, Ayush did all the work for us, as described in this article.

The first step is similar to what we did previously: we need to make all the drivers and libraries available to ODI Studio and the agent. The steps in the two Knowledge Modules contain Jython code that calls some Java methods from the odi-bics.jar file. These methods, just like the Oracle Storage Cloud Service Java library, use the Jersey libraries to do the RESTful web service calls, so we will need them as well. Basically, just take all the jar files from the archive you can find on java.net under the name "RKM and IKM for Oracle BI Cloud Service". As mentioned above, we need to add them to the classpath of ODI Studio and the agent installs, and restart them.

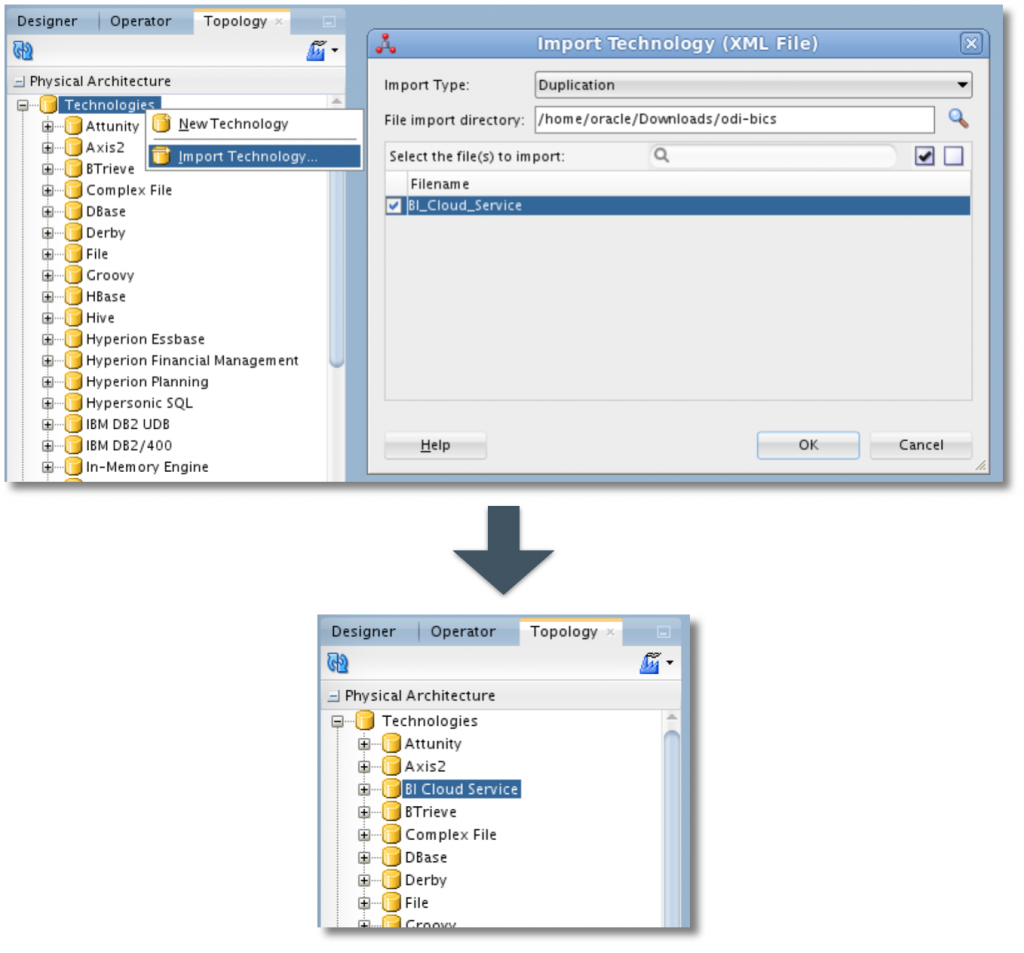

The article mentions that we need to create a new Technology for BI Cloud Service, but an XML export is already provided in the archive. Go to the Topology, right-click on Technology and import the XML file.

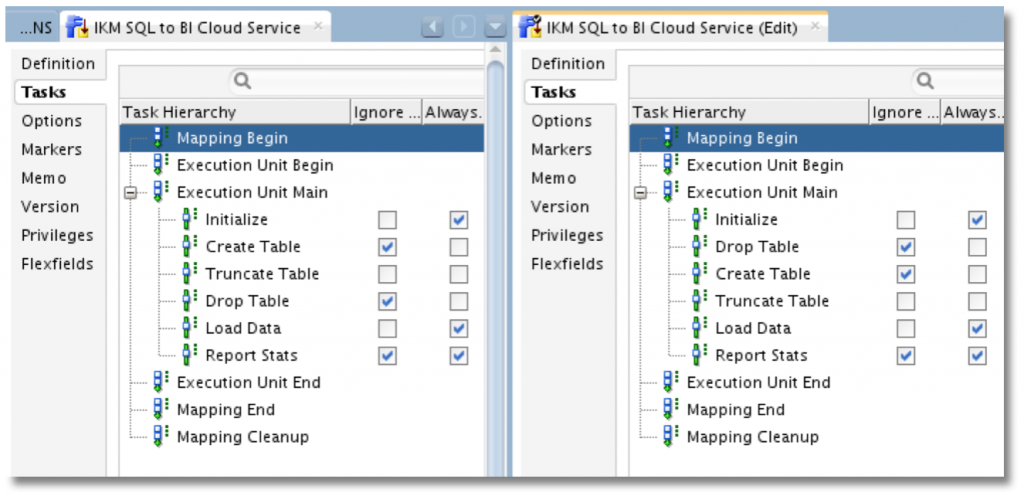

We can also import the two Knowledge Modules, either globally or under a single project. Ayush wrote one RKM to reverse-engineer the tables from our BICS schema and one Multi-Connections IKM to integrate the data from the on-premise database into the BICS schema in the cloud. I had to tweak the IKM a little bit, but it might be linked to my particular setup. The steps were not displayed in the order they were set in the XML file, and I had to reorder them to place the drop before the create and truncate. It is probably a bug; I will investigate that later.

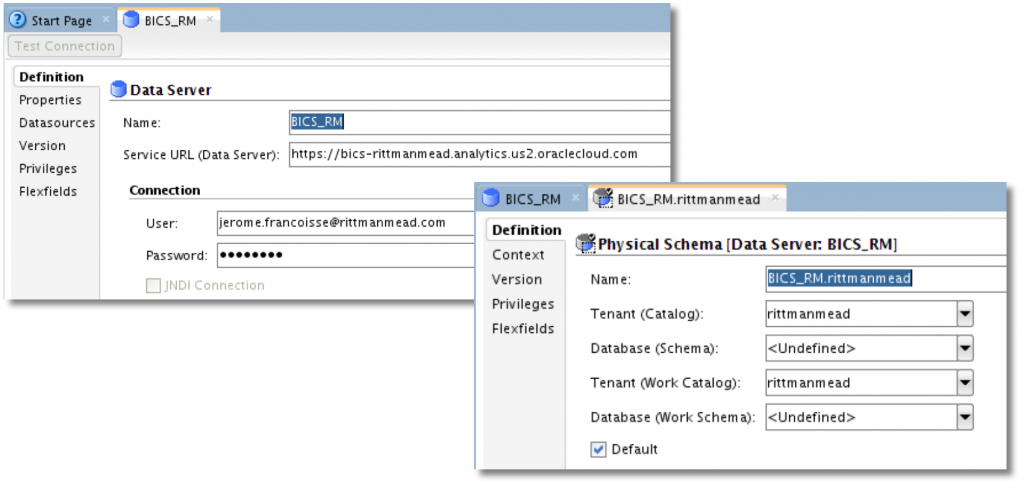

We are done with the install of the new components and we can now set up the topology. First create a Data Server with the newly imported technology and provide a login/password and the URL to the BICS instance as the Service URL. From my testing, and unlike Ayush's screenshot, it should start with https and should not include the port number. Of course, we also need to create a physical schema, where we provide our Identity Domain in both Tenant fields. We can leave the Database fields empty. The last step in the Topology is to create a Logical schema and associate it with the Physical schema through a context.
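The two observations about the Service URL (https scheme, no port number) are easy to check before pasting the value into the Data Server. Here is a minimal Python sketch of such a check. The function name `check_service_url` and the example host are hypothetical, and the rules encoded are only what I observed in my own testing, not an official specification.

```python
from urllib.parse import urlsplit


def check_service_url(url):
    """Return a list of problems; an empty list means the URL looks fine."""
    parts = urlsplit(url)
    problems = []
    if parts.scheme != "https":
        problems.append("scheme should be https")
    if not parts.hostname:
        problems.append("host name is missing")
    try:
        has_port = parts.port is not None
    except ValueError:
        # urlsplit raises ValueError when the port is not a valid number.
        has_port = True
    if has_port:
        problems.append("port number should be omitted")
    return problems


# Hypothetical URLs, for illustration only.
print(check_service_url("https://mybics.example.com"))
print(check_service_url("http://mybics.example.com:9704"))
```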

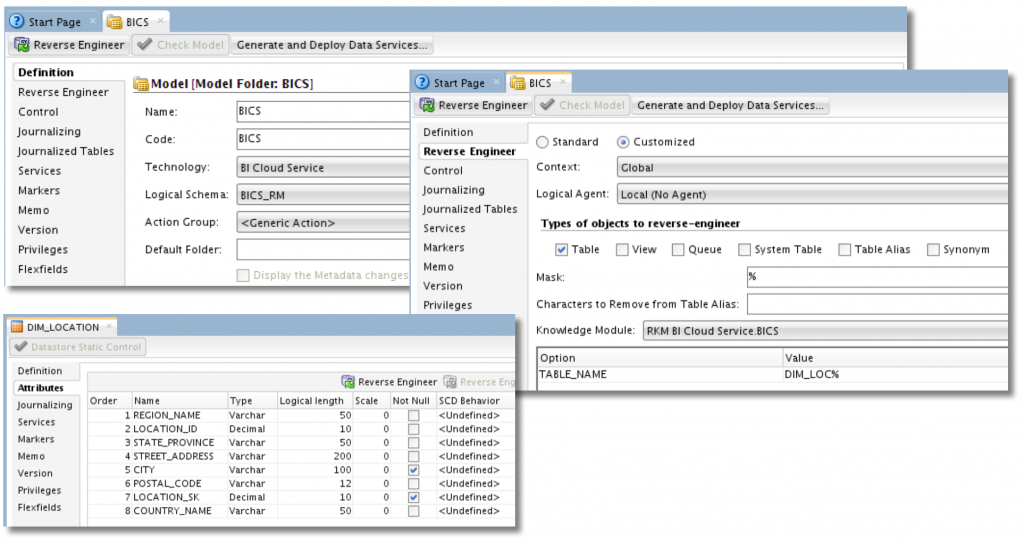

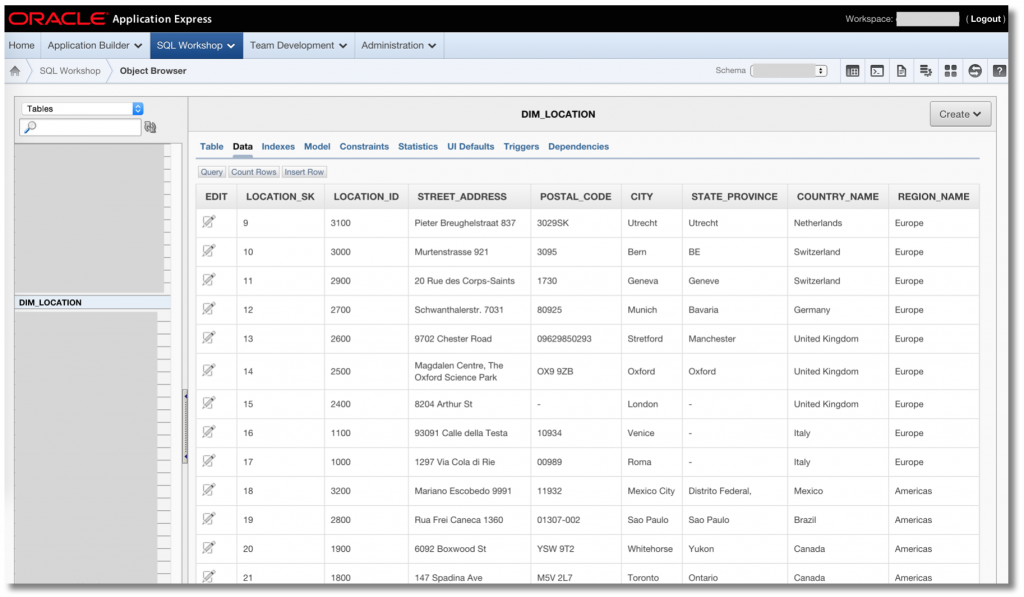

We can then switch to the Designer tab and create a new Model with the BI Cloud Service technology and that Logical Schema. On the Reverse Engineer tab we select Customized and the RKM BI Cloud Service. We can also specify a mask to restrict the metadata import to specific table names before hitting the Reverse Engineer button at the top. I set the mask to DIM_LOC% and only my DIM_LOCATION table has been reverse-engineered from the BICS schema.
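To illustrate how such a mask narrows down the list of tables, here is a small Python sketch. It assumes that `%` stands for "any sequence of characters" and that every other character is matched literally. The RKM's real matching rules may differ, and the helper names and the table list are made up for the example.

```python
import re


def mask_to_regex(mask):
    """Translate a mask such as DIM_LOC% into a compiled regular expression.

    Assumption: only '%' is a wildcard; everything else is literal.
    """
    literal_parts = [re.escape(part) for part in mask.split("%")]
    return re.compile(".*".join(literal_parts))


def filter_tables(table_names, mask):
    """Keep only the table names that match the whole mask."""
    pattern = mask_to_regex(mask)
    return [name for name in table_names if pattern.fullmatch(name)]


# Hypothetical schema content.
tables = ["DIM_LOCATION", "DIM_PRODUCT", "FACT_SALES"]
print(filter_tables(tables, "DIM_LOC%"))
```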

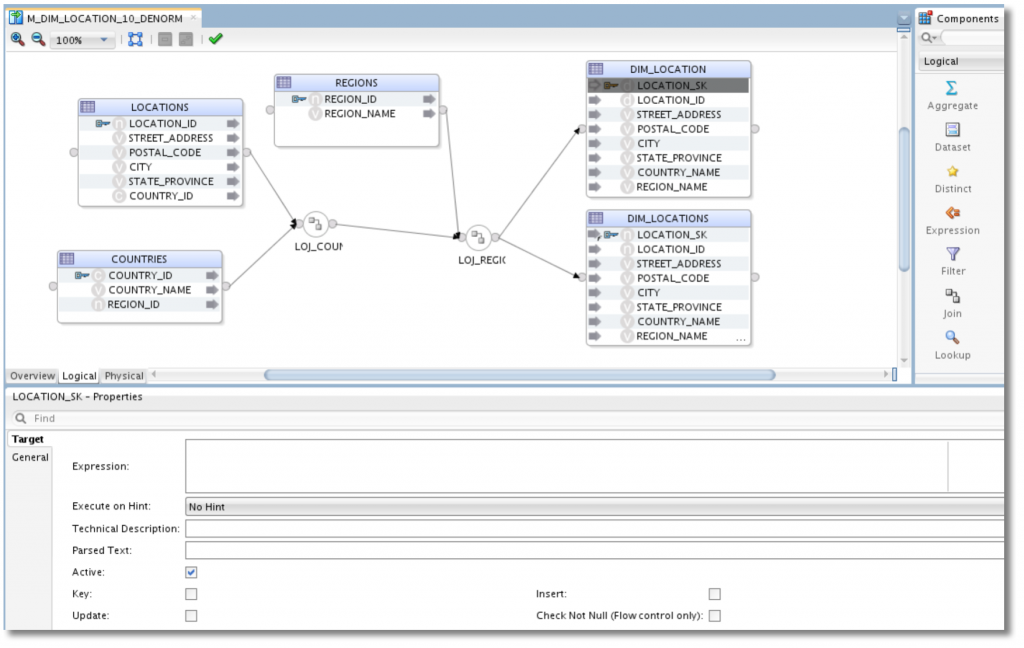

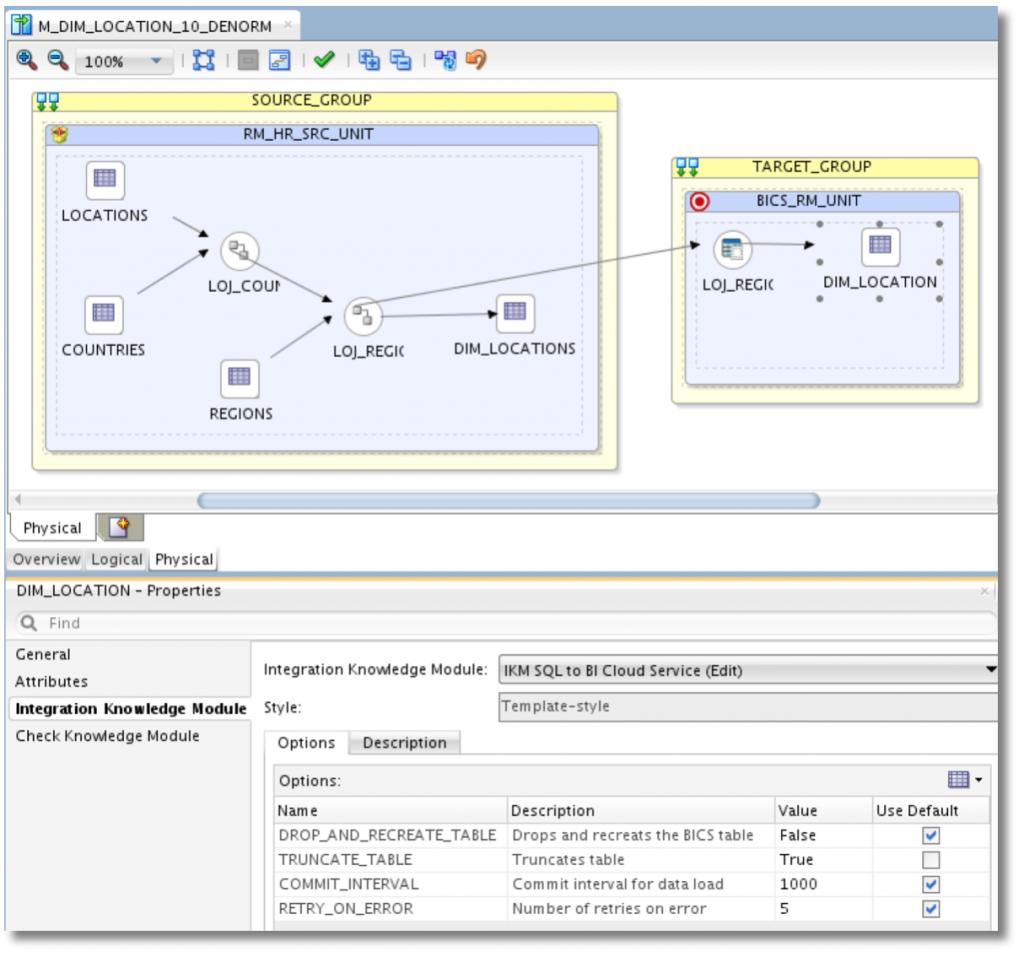

Finally, we can create a mapping. In this example I load two target tables. DIM_LOCATIONS (plural) sits in an on-premise Oracle Database for users accessing OBIEE from our HQ, while DIM_LOCATION (singular) is in the schema linked to our BICS instance, used by remote users. There is nothing special here, except that I unselected the insert and update checkboxes for my surrogate key (LOCATION_SK) because I asked BICS to populate it automatically when I created the table. Behind the scenes, a sequence is created and a trigger is added to the table to populate the field with the next value of the sequence on each insert.

On the Physical tab, the LKM should be set to LKM SQL Multi-Connect because we will delegate the data transfer to the Multi-Connections IKM. This IKM is the one we imported: IKM SQL to BI Cloud Service. I chose to enable the truncate option here.

Let's hit the execute button and watch the result in the operator and in our BICS schema.

The two articles demonstrate one more time how easy it is to plug a new technology into ODI. This tool is a wonderful framework that can be used to move data from and to any technology, even Big Data or Cloud as we have seen here. By having every integration job happen in the same place, we get easier maintenance and better monitoring, and we can schedule all the jobs together. It's also way easier to see the big picture in our projects.

If you have any questions about this post, feel free to reach out to me. We love that kind of interesting challenge at Rittman Mead.

Thanks a lot to Ayush for the great components and libraries he provided. I'm glad I will share the stage with him and a fellow ODI expert, Holger Friedrich, at Oracle OpenWorld. We will speak about Best Practices for Development Lifecycle Management. Come to see us and say hi!