Customisations in BI Apps 11.1.1.7.1 Part 2 : Category 2 Changes

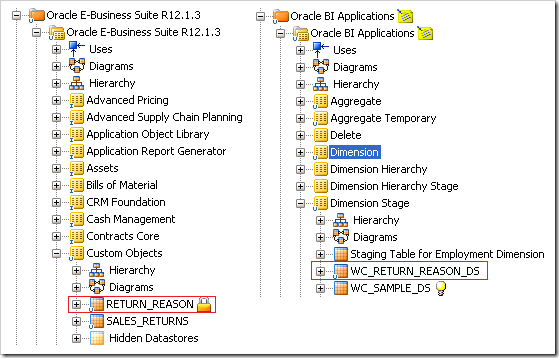

In the last blog I went through a very basic customisation, adding a new column to an existing dimension table. That served as an overview for how the BI Apps mappings are packaged in ODI for use in a dynamic ETL. This blog will go through the creation of a dimension and fact table, illustrating some of the concepts used to maintain data integrity. Most of these are similar to concepts used in previous releases of BI Apps, but modified for use in the new tool. For this example I will be adding two new tables to an existing EBS solution and running it as a single ETL load. The first is a dimension based on RETURN_REASON, the second is a fact based on SALES_RETURNS.

The first step is to create the source and target table definitions in the ODI model if they don’t already exist. Remember that you can just specify the table name and then use the Reverse Engineer feature to get the columns. The only constraint is that the table definitions are made in the correct models, but it’s worth grouping them into sub-models so that they can be navigated easily.

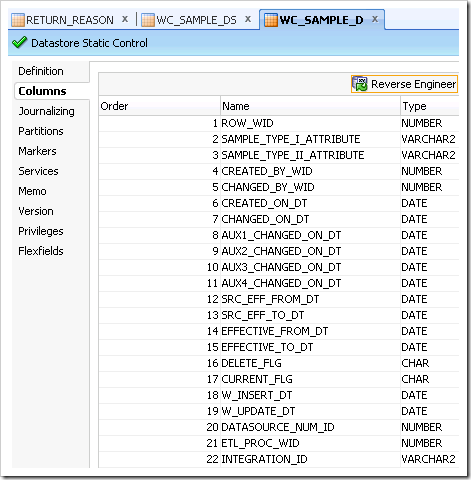

There are sample tables seeded in the repository for dimensions, staging tables and facts. These tables indicate the recommended naming convention (prefixing with WC_ instead of W_) as well as required system columns for warehouse tables. Below is a screenshot of the columns from the sample dimension table. All of these were included in tables for previous releases of BI Apps.

- ROW_WID: Surrogate key for dimension tables.

- INTEGRATION_ID: Natural key from the source table. This is often a concatenation of keys when several tables are used to populate a dimension.

- DATASOURCE_NUM_ID: The identifier of the source system the data was extracted from. This allows for multiple sources to populate the same warehouse table without conflict.

- ETL_PROC_WID: Run identifier for the load.

- EFFECTIVE_FROM_DT/EFFECTIVE_TO_DT: These can be used to enable SCD type 2 dimensions.

- CREATED_ON_DT/CHANGED_ON_DT: These dates (and all of the auxiliary changed dates) come from system columns on the source system. They are used to extract only new or changed rows. The auxiliary dates extend this logic to cases where the change date has to be derived from several source tables.

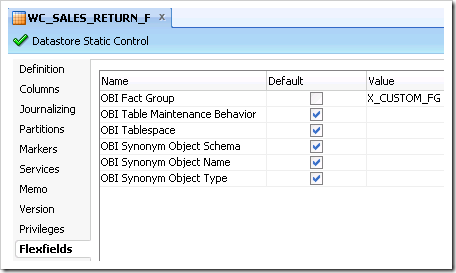

- Flexfield OBI Fact Group can be set on fact tables to link them to configuration groups.

- Staging tables are identified from the naming convention, e.g. XX_D will assume a staging table of XX_DS.

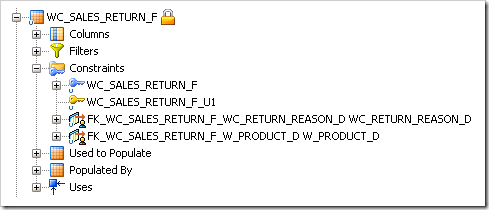

- Required dimensions for a fact are identified by reference constraints defined in the ODI model.
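The staging-table convention above can be sketched in a few lines. This is only an illustration of the naming rule as described here, not code taken from BI Apps; the function name and the table names WC_RETURN_REASON_D and WC_SALES_RETURNS_F are assumptions made up for this example.

```python
def staging_table_name(target_table: str) -> str:
    """Return the staging table name implied by a warehouse table name.

    Assumes the convention described above: a dimension XX_D is staged
    in XX_DS and, by the same pattern, a fact XX_F in XX_FS.
    """
    if not target_table.endswith(("_D", "_F")):
        raise ValueError(f"{target_table} is not named like a dimension or fact")
    return target_table + "S"


# Hypothetical custom tables for the example in this post.
for table in ("WC_RETURN_REASON_D", "WC_SALES_RETURNS_F"):
    print(table, "->", staging_table_name(table))
```

The point of the convention is that nothing has to be configured by hand: as long as the custom tables follow the naming pattern, the staging table can be inferred from the target.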

There is a fact group, X_CUSTOM_FG, which is included in each functional area. It is recommended that generic customisations are included in this group. You can set this on the datastore definition as above. In addition, create the following constraints on the new datastores:

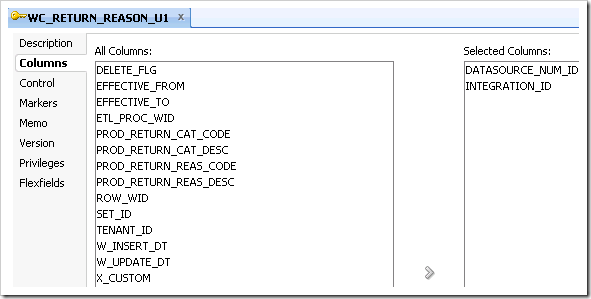

- Staging Tables: Primary Key over INTEGRATION_ID and DATASOURCE_NUM_ID

- Dimension Tables:

  - Primary Key over ROW_WID

  - Alternate Key over INTEGRATION_ID and DATASOURCE_NUM_ID

- Fact Tables:

  - Primary Key over ROW_WID

  - Alternate Key over INTEGRATION_ID and DATASOURCE_NUM_ID

  - References for each foreign key to the ROW_WID of the parent table

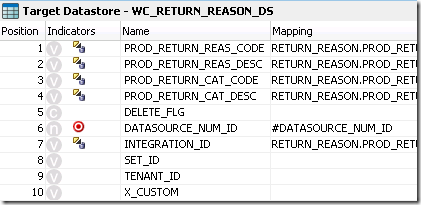
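To make the purpose of each constraint concrete, here is a minimal sketch that uses an in-memory SQLite database as a stand-in for the warehouse. In ODI these keys and references are defined in the model rather than written as DDL, so this is only an illustration of what they enforce. The table names, the RETURN_REASON_WID column and the DATASOURCE_NUM_ID values are assumptions for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite only checks references when enabled
conn.executescript("""
CREATE TABLE WC_RETURN_REASON_D (
    ROW_WID           INTEGER PRIMARY KEY,             -- surrogate key
    INTEGRATION_ID    TEXT    NOT NULL,                -- natural key from the source
    DATASOURCE_NUM_ID INTEGER NOT NULL,
    UNIQUE (INTEGRATION_ID, DATASOURCE_NUM_ID)         -- alternate key
);
CREATE TABLE WC_SALES_RETURNS_F (
    ROW_WID           INTEGER PRIMARY KEY,
    INTEGRATION_ID    TEXT    NOT NULL,
    DATASOURCE_NUM_ID INTEGER NOT NULL,
    RETURN_REASON_WID INTEGER NOT NULL
        REFERENCES WC_RETURN_REASON_D (ROW_WID),       -- reference to the parent dimension
    UNIQUE (INTEGRATION_ID, DATASOURCE_NUM_ID)
);
""")


def try_insert(sql: str, params: tuple) -> bool:
    """Run an insert and report whether the constraints accepted it."""
    try:
        conn.execute(sql, params)
        return True
    except sqlite3.IntegrityError:
        return False


DIM_SQL = "INSERT INTO WC_RETURN_REASON_D VALUES (?, ?, ?)"
FACT_SQL = "INSERT INTO WC_SALES_RETURNS_F VALUES (?, ?, ?, ?)"

first_row = try_insert(DIM_SQL, (1, "DAMAGED", 1000))
same_key_same_source = try_insert(DIM_SQL, (2, "DAMAGED", 1000))   # alternate key clash
same_key_other_source = try_insert(DIM_SQL, (3, "DAMAGED", 2000))  # different source system
fact_with_parent = try_insert(FACT_SQL, (1, "RET-1", 1000, 1))
fact_without_parent = try_insert(FACT_SQL, (2, "RET-2", 1000, 99))  # no such dimension row

print(first_row, same_key_same_source, same_key_other_source,
      fact_with_parent, fact_without_parent)
```

The alternate key is what allows the same INTEGRATION_ID to arrive from two source systems without conflict, while still rejecting a duplicate from the same source.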

After the datastores are configured, it’s time to create the SDE interfaces and packages. Create these in the Custom_SDE folder so that they are kept separate from any prebuilt logic. Most of the columns can be mapped across directly, but it is important to use the global variable for DATASOURCE_NUM_ID. Variables are referenced by prefixing the name with #, and can also be inserted using the GUI expression editor.

The other important thing for the SDE mappings is to add a filter for the extract logic. Previously, this was done using two workflows and overriding the logic on one of them. Now we only need one interface as we can use a global function (seeded in the repository) to perform the logic. The logic used in the example is as follows:

RUN_FULL_INCREMENTAL("#IS_INCREMENTAL",(RETURN_REASON.CREATION_DATE > TO_DATE_VAR('#INITIAL_EXTRACT_DATE')),(RETURN_REASON.LAST_UPDATE_DATE > TO_DATE_VAR('#LAST_EXTRACT_DATE')))

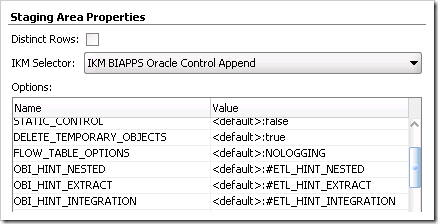

Here, #IS_INCREMENTAL is derived by querying a system table, W_ETL_LOAD_DATES. Once the mappings are made, they should be included in a package which refreshes the IS_INCREMENTAL and LAST_EXTRACT_DATE variables first. This is typical of all the extract mappings, and the package can be built by dragging the necessary objects across, defining one of the variables as the first step and joining the steps using the green connectors. For all staging mappings, choose the BI Apps Control Append IKM in the flow tab of the interface designer. There are BI Apps versions of all the default IKMs which have some additional features.
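The behaviour of the extract filter can be sketched in plain Python. This mirrors how the expression is used in the example (the first condition applies to a full load, the second to an incremental load); it is not the implementation of the seeded RUN_FULL_INCREMENTAL function. The sample rows and dates are invented for illustration.

```python
from datetime import date


def extract_filter(row: dict, is_incremental: bool,
                   initial_extract_date: date, last_extract_date: date) -> bool:
    """Decide whether a source row should be extracted.

    Full load: take everything created after the initial extract date.
    Incremental load: take only rows updated since the last extract.
    """
    if is_incremental:
        return row["LAST_UPDATE_DATE"] > last_extract_date
    return row["CREATION_DATE"] > initial_extract_date


# Hypothetical RETURN_REASON rows.
source_rows = [
    {"ID": "A", "CREATION_DATE": date(2010, 1, 1), "LAST_UPDATE_DATE": date(2010, 1, 1)},
    {"ID": "B", "CREATION_DATE": date(2012, 5, 1), "LAST_UPDATE_DATE": date(2012, 5, 1)},
    {"ID": "C", "CREATION_DATE": date(2013, 6, 15), "LAST_UPDATE_DATE": date(2013, 6, 15)},
]
initial_extract = date(2011, 1, 1)
last_extract = date(2013, 6, 1)

full_load = [r["ID"] for r in source_rows
             if extract_filter(r, False, initial_extract, last_extract)]
incremental_load = [r["ID"] for r in source_rows
                    if extract_filter(r, True, initial_extract, last_extract)]

print("full:", full_load)
print("incremental:", incremental_load)
```

Because the choice between the two conditions is made at run time from a variable, a single interface can serve both the first load and every later one.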

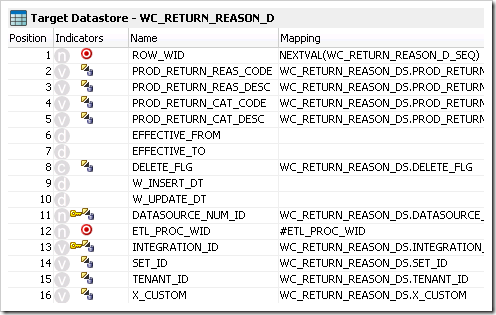

The SIL mappings are created in much the same way, but require an update key to be selected. It’s important that this is the key defined over the INTEGRATION_ID and DATASOURCE_NUM_ID. The update key can be set on the target datastore properties. In order to populate the ROW_WID, a sequence needs to be created. The prebuilt mappings all use native sequences stored in the data warehouse. This can then be imported into ODI and referenced by using NEXTVAL(SEQUENCE_NAME).

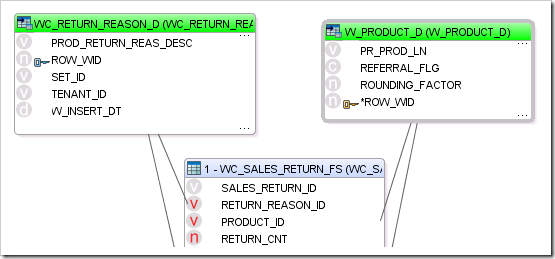
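The role of the update key and the sequence can be sketched as a simple insert-or-update routine. This is not what the BI Apps IKMs generate; it is a minimal Python stand-in, with an in-memory dictionary as the target table and `itertools.count` playing the part of the native sequence. The column RETURN_REASON_NAME and the sample values are hypothetical.

```python
from itertools import count

row_wid_sequence = count(start=1)  # stands in for the warehouse sequence
target_table = {}                  # keyed by (INTEGRATION_ID, DATASOURCE_NUM_ID)


def incremental_update(staged_rows: list) -> None:
    """Insert new rows with a fresh ROW_WID; update existing rows in place."""
    for row in staged_rows:
        update_key = (row["INTEGRATION_ID"], row["DATASOURCE_NUM_ID"])
        existing = target_table.get(update_key)
        if existing is None:
            # New natural key: draw the next surrogate key from the sequence.
            target_table[update_key] = {**row, "ROW_WID": next(row_wid_sequence)}
        else:
            # Known natural key: overwrite attributes, keep the original ROW_WID.
            existing.update(row)


# First load.
incremental_update([
    {"INTEGRATION_ID": "DAMAGED", "DATASOURCE_NUM_ID": 1000, "RETURN_REASON_NAME": "Damaged"},
    {"INTEGRATION_ID": "LATE", "DATASOURCE_NUM_ID": 1000, "RETURN_REASON_NAME": "Late"},
])
# Second load: one changed row and one new row.
incremental_update([
    {"INTEGRATION_ID": "DAMAGED", "DATASOURCE_NUM_ID": 1000, "RETURN_REASON_NAME": "Damaged in transit"},
    {"INTEGRATION_ID": "WRONG", "DATASOURCE_NUM_ID": 1000, "RETURN_REASON_NAME": "Wrong item"},
])

for row in target_table.values():
    print(row["ROW_WID"], row["INTEGRATION_ID"], row["RETURN_REASON_NAME"])
```

If the update key were set to ROW_WID instead, every staged row would look new (the staging table carries no ROW_WID), so changed rows would be inserted again rather than updated.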

The other main difference for the SIL mappings is that they use the BI Apps Incremental Update or BI Apps Slowly Changing Dimension IKMs. For dimensions, the IKM has a useful option to insert the unspecified row automatically. For fact mappings (and some dimensions) it will be necessary to perform lookups. This procedure is done very simply by clicking the magnifying glass icon in the interface designer. That will open a wizard which allows you to select the table and the join condition. After that, any column from the lookup table can be used in the target expressions.

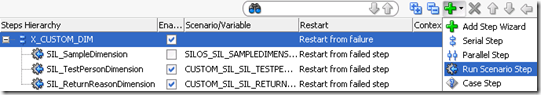
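The effect of a lookup in a fact mapping, together with the unspecified row, can be sketched as follows. The sketch assumes the unspecified row has a ROW_WID of 0 and that facts with no matching dimension row are pointed at it; the function name, the lookup contents and the sample keys are illustrative assumptions, not part of the ODI wizard.

```python
UNSPECIFIED_ROW_WID = 0  # assumed ROW_WID of the unspecified row

# Dimension lookup: (INTEGRATION_ID, DATASOURCE_NUM_ID) -> ROW_WID
return_reason_lookup = {
    ("DAMAGED", 1000): 1,
    ("LATE", 1000): 2,
}


def resolve_wid(lookup: dict, integration_id: str, datasource_num_id: int) -> int:
    """Return the dimension ROW_WID for a natural key, or the unspecified row."""
    return lookup.get((integration_id, datasource_num_id), UNSPECIFIED_ROW_WID)


# Hypothetical staged fact rows carrying the natural key of the dimension.
staged_facts = [
    {"INTEGRATION_ID": "RET-1", "DATASOURCE_NUM_ID": 1000, "RETURN_REASON_ID": "DAMAGED"},
    {"INTEGRATION_ID": "RET-2", "DATASOURCE_NUM_ID": 1000, "RETURN_REASON_ID": "UNKNOWN"},
]

resolved = [
    {**fact, "RETURN_REASON_WID": resolve_wid(
        return_reason_lookup, fact["RETURN_REASON_ID"], fact["DATASOURCE_NUM_ID"])}
    for fact in staged_facts
]

for fact in resolved:
    print(fact["INTEGRATION_ID"], fact["RETURN_REASON_WID"])
```

Joining on both the natural key and DATASOURCE_NUM_ID matters here for the same reason as in the alternate key: the same source identifier may exist in more than one source system.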

The SIL interfaces also need to be put into packages, although only the IS_INCREMENTAL variable needs to be refreshed. Once all of the packages have been created, scenarios need to be generated for each of them. This can be done for all packages at once by choosing generate scenarios at a higher folder level. Scenarios for existing packages will be regenerated. These new scenarios need to be added to the master load plan, in the X_CUSTOM plans for SDE Dims, SDE Facts, SIL Dims and SIL Facts. Add each step by selecting the highest level and choosing the Run Scenario step from the add menu. Then set the restart mode to Restart from failed step.

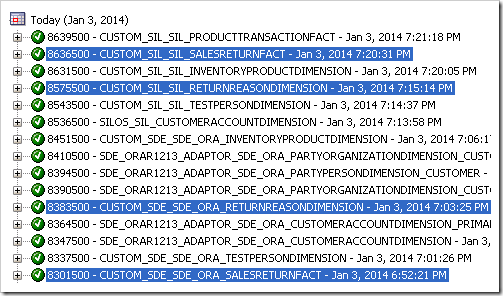

Once all of this has been done, the load plan must be edited to include the X_CUSTOM_FG fact group. This is done through the Configuration Manager, where the plan can also be regenerated. After running the plan, I could see all of the tasks being included in the appropriate order. The data was successfully loaded into the fact table, with the foreign keys resolving.

That concludes the guide to customisations in the new BI Apps. Hopefully it has helped to explain the overall process for these customisations and why some of the steps are necessary. The Oracle documentation is very thorough and is certainly worth a look for some of the finer details. Conceptually, a lot is in common with previous BI Apps releases. The only real change is the new tool, which brings some very good new features.