Can Endeca Provide an Alternative to OBIEE for Fast, Complex Ad-hoc Analysis?

Towards the end of last week I was pulling together an article for Oracle Magazine on Oracle Endeca Information Discovery, and how it can now use OBIEE's BI Server and repository as an additional data source. I won't go into the details of that connectivity now as it'll be covered in the article itself, but one of the reasons I wanted to write this column was because of the dilemma I outlined in this blog post earlier in the month - is it possible to provide an ad-hoc query tool that gives users ultimate flexibility in how they create the report, but always guarantees sub-second response times. In that blog post, and in the Kevin McGinley blog post that inspired it, I speculated that Endeca Information Discovery 2.x might be able to provide that combination of features and I was keen to try this out in a "real-world" reporting scenario. If you're new to Endeca and want to do a bit of background reading first, check out our Endeca focus area and these particular postings on the Endeca technology stack:

- Oracle Endeca Week : So Just What Is Endeca?

- Oracle Endeca Week : What is the Endeca MDEX Engine?

- Oracle Endeca Week : Where Does Endeca Fit with Oracle BI and DW?

- Oracle Endeca Week : How Do You Develop Endeca Latitude Applications?

- Creating an Oracle Endeca Information Discovery 2.3 Application Part 1 : Scoping and Design

- Creating an Oracle Endeca Information Discovery 2.3 Application Part 2 : Preparing and Loading Data

- Creating an Oracle Endeca Information Discovery 2.3 Application Part 3 : Creating the User Interface

Update 25-Mar-2013: Endeca Information Discovery 3.0 is now out, and you can read about my first impressions of this updated release here.

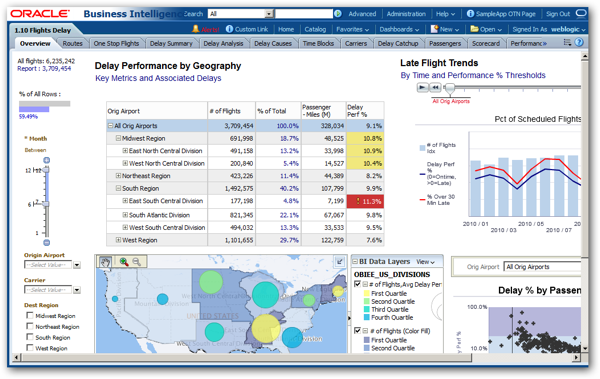

So in the article, I used the OBIEE 11.1.1.6 v207 SampleApp as my data source, in particular the Airline Flight Delays dataset that exists in cut-down form in this VirtualBox VM, and in expanded form on our Exalytics demo server. The screenshot below shows this dataset in an OBIEE dashboard, that then allows the end-user to analyze flight statistics and associated delays over a number of years using graphs, pivot tables, maps and other interactive visualisations.

All of this works well, and a set of pre-built summary tables that also come with the VM ensure that the reports in the demo run fairly fast - as you'd expect from a demo system provided by Oracle to showcase the tool. But if you go outside of the dashboard and use the analysis editor to create a new report, particularly one that involves lots of subtotals, time-series functions, outer joins across multiple fact tables and so forth, then it can sometimes be many seconds, maybe minutes before it returns data - basically, if you try hard enough then you'll construct a query that returns results too slow for a demanding user. I had this issue a couple of weeks ago when I visited a client looking to upgrade from OBIEE 10g to 11g, and who were trying reconcile what appeared to be two contradictory aims - provide a reporting environment where the user could request just about anything, however complex or expensive the query, and the expectation was that the answer would come back straightaway.

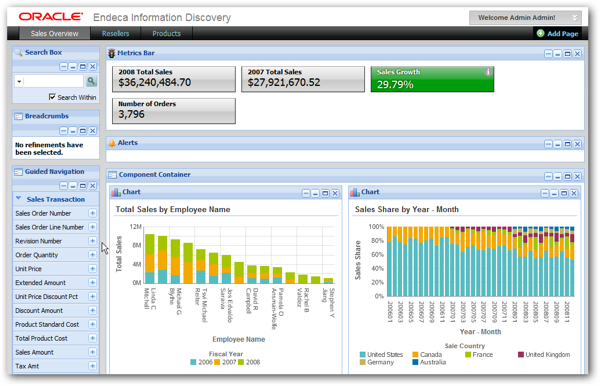

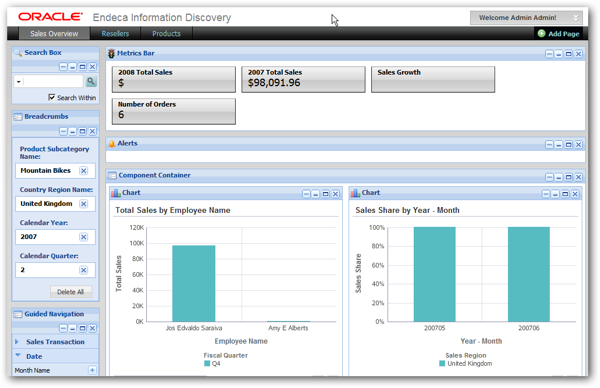

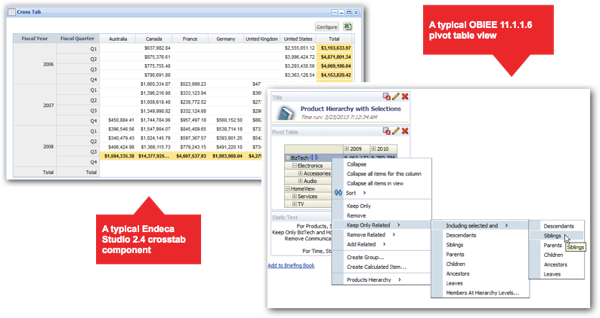

Of course in reality it's a tough call to do this consistently with OBIEE, but could Endeca Information Discovery meet this requirement, with its in-memory, column-store search analytic database back-end and it's web-based dashboard interface? Looking at the screenshot below from the Quickstart demo application that comes as part of the Endeca Information Discovery 2.4 download, it's not a million miles away from an OBIEE dashboard, and if it provides guaranteed split-second response times, could it be a viable replacement for Answers, or at least an option that we could turn to for a particular type of user?

It's worth saying at this point that Oracle, quite clearly, don't position Endeca Information Discovery as a replacement or upgrade for OBIEE; instead, as I explain in this presentation from last year's Oracle Openworld, Oracle position Endeca Information Discovery in the following way:

- Primarily, as a BI tool that enables analysis of unstructured and semi-structured data, as well as more traditional structured (measures, dimensions etc) datasets

- As a "data discovery" tool, through its ability to bring together loosely-related datasets and analyse them using search and lexical analysis tools

- As an agile, rapid application development tool for BI, because the key/value-pair record-orientated database provided by the Endeca Server requires little upfront data modelling, using a "schema on read" approach rather than the traditional "schema on write" used by relational databases and OBIEE's semantic layer.

But … Endeca has a number of properties that might be of interest to more traditional BI users looking for in-memory ad-hoc analysis:

- The in-memory key-value store database it uses doesn't have the same costs around data manipulation, table joins and disk access that traditional databases have, and the column-based storage it uses is particularly suited to selecting from sets of dimension members

- The search-and-click-orientated interface might actually suit users better now, given that everyone uses Google and tools such as QlikView have taken off

- It's got a good range of data visualisation components, and a web-based dashboard that appears to do a similar job to OBIEE's interactive dashboard.

So it seemed a good opportunity to take some data from an OBIEE 11g repository, load it up into an Endeca Server datastore, and see how well it actually worked as a replacement for Answers, even though this isn't what the product was intended for.

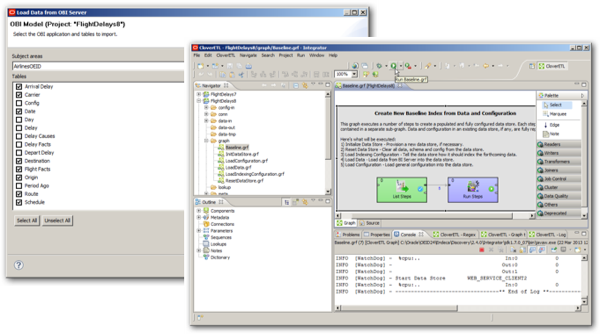

The article goes through the steps loading up the Endeca Server datastore through a connection to OBIEE's BI Server, and it works pretty well although I do have to create a special version of the SampleApp Flight Delays business model that removes the numbering from the business model tables, as Endeca doesn't allow numbers as prefixes for its datastore attribute names. I took most of the tables from the Flight Delays subject area, and all of the rows, with the new BI Server integration feature creating a skeleton project for me in Endeca Information Discovery Integrator.

The article then goes on to create a basic dashboard, using a few search and guided navigation components on the left-hand side of the dashboard page, and a selection of basic visualisations on the right, looking something like this:

So far so good, and for an article whose focus is mainly around the Endeca-OBIEE integration piece, it illustrates the feature well. But how well does this work as an ad-hoc query tool, how fast are the queries, and how might a typical end-user get on trying to create their own reports, graphs and other visuals?

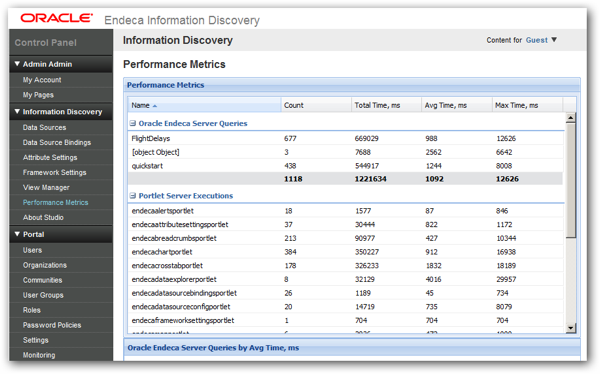

Initial results were encouraging; response times were consistently fast, albeit within the limited, controlled environment provided by the dashboard. All interactions were really in the form of filtering and searching of data, but as you can see from the performance stats below provided by the Endeca Information Discovery Studio control panel, all response times on all dashboards were under a second.

Compare this with similar stats for the OBIEE dashboards and analyses, and whilst most queries also came in around a second or so, some of them, particularly the more complex ones (and, ironically, the ones using data from TimesTen) were ten, fifteen or more seconds on average, and this is for a system with predefined queries, aggregate tables and most probably, caching enabled as well. Loading the SampleApp flight delays data into Endeca took around 20 minutes or so and took up around 600MB of memory on the VM, so based on this initial test, Endeca looks an interesting alternative to Answers and a regular RBDMS back-end database.

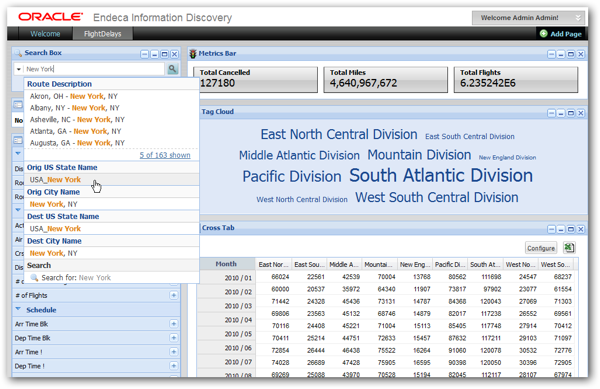

But it's when you come to use Endeca Information Discovery's dashboard as an ad-hoc reporting tool that you begin to see the limitations, at least with Studio, the current web-based front-end for the Endeca Server. Going back to the Quickstart dashboard for a moment, the basic analysis process that an end-user would use with this tool would be as follows:

- Use the search and guided navigation tools to identify, and then focus in-on, the records that are of interest to you

- Then, using the graphing, tabular, tag cloud and other visualisations, aggregate and analyse the measures within that subset of records

To take an example, you might use the search and guided navigation tools in Quickstart to focus-in on mountain bike sales, in the UK, made in the second quarter of calendar year 2007, and once you've done this you would see a dashboard page looking like the one below.

The Endeca Server engine is good at aggregating data on the fly, and as the whole dataset should be held in-memory, results should return fast. In fact, this dashboard doesn't look a million-miles away from an OBIEE dashboard, with the Breadcrumbs component on the left looking just like a set of dashboard prompts on an OBIEE dashboard. And this works pretty well, with the main issue then coming from how suitable a tool Studio is for users creating their own reports.

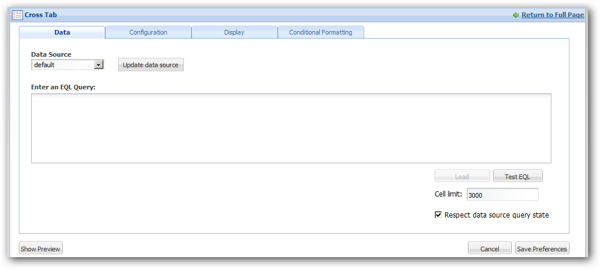

For example, going back to the dashboard I created for the Oracle Magazine article, suppose I wanted to add a cross tab (pivot table) component to the dashboard. Selecting it from the list of new components is easy enough, but then the first dialog you're presented with when you come to configure the rows and columns is this one:

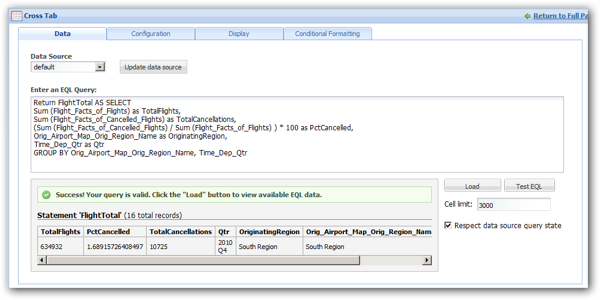

errrrr … what's an EQL query? In fact, EQL is much like OBIEE's logical SQL and therefore easy to write, but you still need to know the names of attributes, the format aggregation functions need to take, and of course the particular syntax EQL uses. In this example, to create a cross tab that breaks down flights by the percentage that cancel over region and quarter, the EQL would look something like this:

Once you know EQL, and particularly if you've got a copy of Endeca Information Discovery Integrator open in front of you with the attribute names listed, it's not exactly rocket-science, but its' still a process more like creating ADF Data Visualization Components than working with OBIEE's Analysis Editor. According to reports around the upcoming 3.0 release of Endeca Information Discovery, the tabular and crosstab components are being reworked to presumably make them easier for end-users to set up, but where we are now is a long way from the usability of tools like the OBIEE Analysis Editor or even Microsoft Excel.

Once the crosstab is configured and on the screen, options are limited for hierarchy drilling, pivoting, subtotalling and all the other pivot table-style operations that "power users" would expect to see. Attributes, equivalent to columns in OBIEE's subject areas, can be nested within each other, but there's no equivalent to OBIEE 11g's row and column-swapping, selection steps, calculated items and groups, and all the other pivot table features that power users require, and that are typically the cause of the expensive and complex SQL you sometimes see associated with an OBIEE analysis. The screenshot on the left below shows a typical Endeca Information Studio crosstab whilst the one on the right shows a pivot table from OBIEE 11.1.1.6, featuring right-click interactions to further manipulate the report dataset after its returned from the underlying database.

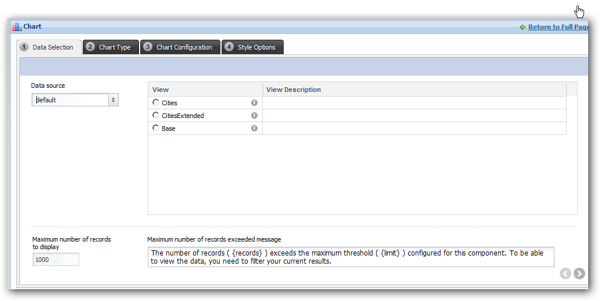

Its a similar story around charts in Endeca Studio. Adding a chart component to a dashboard page and selecting the Properties dialog this time requires that you select from a list of "views", rather than type in an EQL query, with the average end-user probably not knowing what a view is or how to get hold of one.

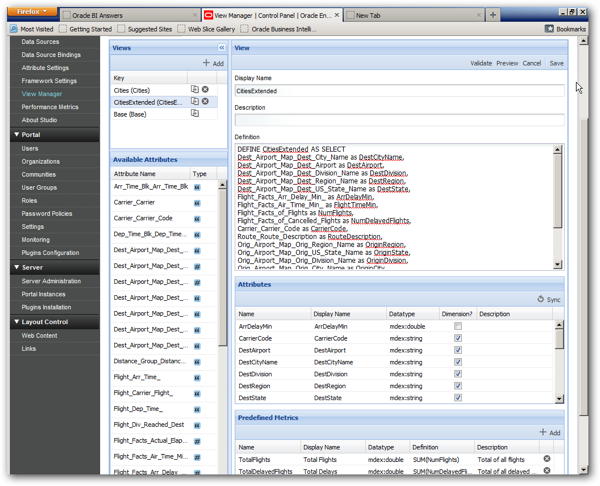

Views are in fact metadata objects within Studio that allow you to define the equivalent of a database view over records and attributes in the datastore, designating some of the attributes as "dimensions" and others as "measures". Except the dimensions are more like member lists, with no obvious place to define hierarchies or any of the other structures associated with OLAP-style reporting. Views are actually quite cool and once you get the hang of them, a great additional to building Studio applications, but it's another technique to learn compared to creating crosstab reports, and with the creation of the view we're starting to get into RPD and catalog-style territory which isn't probably where you want to be end-users spending their time.

Update 24-March-2013: Oracle Endeca Information Discovery 3.0 has now been released, and the Crosstab component (now renamed Pivot Table component) now uses the same "views" datasource as Chart components, taking away the need to type in raw EQL when defining a crosstab component's underlying query.

Another issue is a bit more subtle and concerns how the Endeca Server arranges data in the record-based datastore. When you load data into an Endeca Server datastore, you effectively denormalize all the input tables and files into a single large "table" of records, with each record being made up of a number of attributes (used for data selection) and measures (also attributes, but typically aggregated and displayed in numeric form on reports). This works reasonably well if you're just loading the equivalent of a single fact table and its dimensions into a datastore, with the datastore records taking on the granularity of fact table rows or transactions in your source system. But typically, OBIEE users want to run queries that span multiple fact tables, and once you start trying to mix in fact and transaction sources of differing granularity, you start having to use workarounds and partner solutions such as this in order to have it make some sort of sense to the end user.

None of this is meant to imply any criticism of Endeca Information Discovery, or indeed to let OBIEE off-the-hook; Endeca was designed first and foremost as a data discovery tool, in a way the visualisations and analytics are a bonus, and Oracle have clearly stated that the graphing and analysis elements of the product are one of the major areas of the product they're looking to improve, probably making more use of Oracle's own data visualisation components and presumably moving away from LifeRay portal and Tomcat, on which Endeca Studio is built. But it's no good comparing the relatively simplistic Endeca data analysis components with the more complex ones that OBIEE uses and saying they are faster, because it's precisely those extra features that OBIEE's components use that make their queries run slower - who knows how well Endeca queries would run given a query of a similar complexity?

I think what this exercise said to me is that, whilst the Endeca Server engine is most probably an excellent in-memory back-end for an ad-hoc query tool, the current generation of end-user reporting tools that come with the product just aren't up to the standard that OBIEE power-users would require, and instead clearly they're more aimed at developers who will build a dashboard and then either use it themselves, as part of a data discovery exercise, or give it to users to use in a "locked-down" form. And this is the key thing - if we're considering Endeca as an alternative to OBIEE for these "power users", they're going to want to create their own reports, manipulate their own data and so forth, and clearly not having this ability will more or less make it unusable for them. Of course, the issue is compounded with Endeca in that the only interface into the Endeca Server datastore is via web service calls, rather than ODBC or JDBC, so Studio is more or less the only game in town until Oracle either make the interface more standard, or start to use OBIEE technologies as a way of analysing and visualising Endeca data.

So … close, but realistically a non-runner due to usability issues. If the requirement really is high-speed ad-hoc analysis of data, your best bet is still probably Essbase or OBIEE ideally in conjunction with Oracle Exalytics, but one wonders what Oracle have planned in terms of products to meet this need - some sort of combination of Endeca (for in-memory filtering and navigating across all types of data), Essbase (for aggregation and calculation) and OBIEE (for scalability, metadata management and data federation) combined with the best of each tools' front-end capabilities would be very interesting - a kind of "Project Fusion" for BI, if you were.